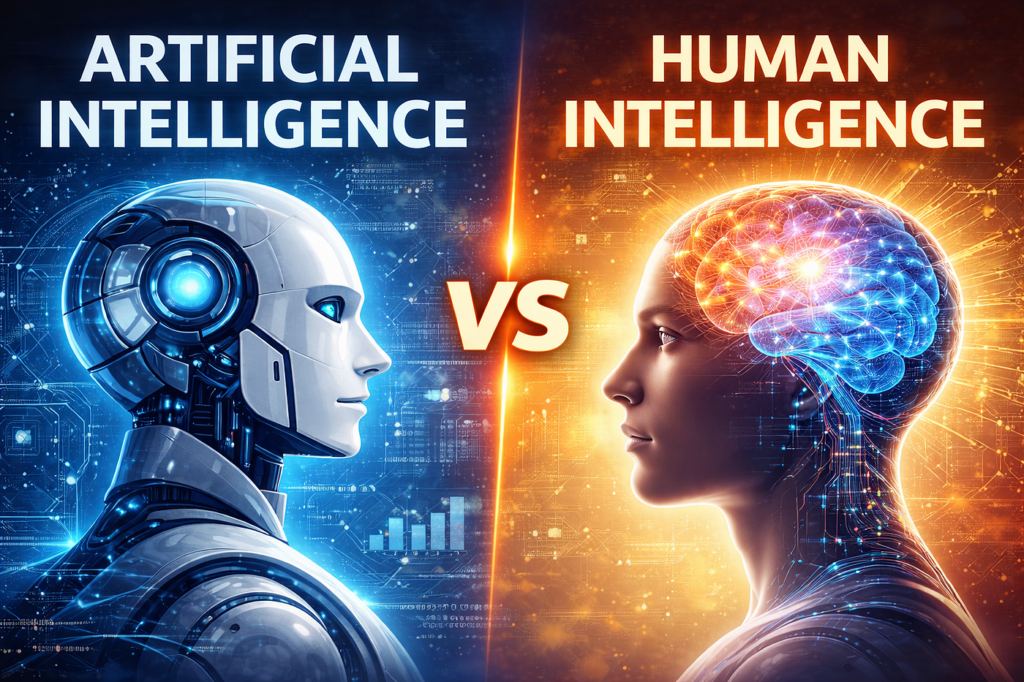

Yes — and increasingly, that’s by design. Artificial Intelligence (AI) underpins almost every aspect of social media platforms used in the UK today. From personalised adverts and content recommendations to moderation, design, and even emotional analysis, AI is the invisible hand shaping what British users see, like and believe on social platforms such as Facebook, X (Twitter), TikTok, Instagram, Snap, and YouTube.

The problem isn’t that AI exists in these spaces — it’s how much power it now holds over user experience, public opinion and even mental health.

Why AI Dominates Social Media

Profit Through Precision

Social media companies rely on advertising revenue. AI enables hyper‑personalisation — showing each user exactly what keeps them scrolling, clicking and buying. The more time you spend on your feed, the more data you generate; the more data you generate, the better the AI predicts what will hold your attention.

That circular model is extremely profitable. According to Ofcom’s 2025 “From Apps to AI Search” report, half of all time UK adults spend online is concentrated on platforms owned by Alphabet (Google/YouTube) and Meta (Facebook/Instagram). These services succeed precisely because their algorithms are efficient at exploiting user behaviour.

Content Moderation at Scale

Tens of millions of posts circulate daily across UK social networks. Human moderation is impossible at that volume, so companies delegate to AI. Machine systems remove harmful or illegal content, identify misinformation and flag sensitive material. This automation is necessary, but it also means that opaque algorithms determine what speech or content survives online.

Data Is the New Currency

Social media firms operate under what many call “surveillance capitalism.” Every post, like and pause on a video becomes data. AI extracts meaning from that behaviour to model personalities, predict interests and influence preferences. In short, AI runs social media because humans are the product.

Is There Too Much AI?

Erosion of User Autonomy

AI no longer simply curates content — it manipulates it. Feeds are no longer chronological or neutral; they are engineered for emotional engagement.

The outcome is often echo chambers, polarised opinions and mental exhaustion. As the British Psychological Society’s 2025 media study concluded, “AI‑driven content design undermines user autonomy by conditioning attention rather than reflecting choice.”

Loss of Human Interaction

Increasingly, AI‑generated text, images and even avatars appear in comments or posts. “Bots” mimic real users to boost engagement or push narratives — commercial or political. For many Britons, it’s no longer clear whether they are interacting with another person or a programmed persona.

Privacy and Psychological Impact

AI surveillance extends into sentiment analysis — reading emotional cues from emojis, comments and time spent per post. That information tailors advertising with uncanny accuracy. In a 2024 Information Commissioner’s Office briefing, regulators warned that this constant behavioural profiling “blurs the line between service and psychological manipulation.”

Why This Situation Exists

Lack of Regulation

In the UK, oversight is fragmented.

- Ofcom regulates harmful content.

- The Information Commissioner’s Office (ICO) oversees data protection.

- The Online Safety Act 2023 seeks to make platforms accountable, but it does not restrict the core business model that depends on AI‑driven engagement.

In short, the state wants platforms to be safe, but not unprofitable — leaving AI’s dominance largely untouched.

Consumer Apathy

Despite privacy concerns, people continue to use these platforms because they are essential to modern communication, employment, and social belonging. The average UK adult spends more than two hours daily on social networks. As one University of Birmingham researcher put it:

“Users know they are being manipulated — but accept it as the price of connection.”

Will It Get Worse in the Future?

Yes — Because AI Is Becoming Self‑Optimising

Next‑generation social platforms already use generative AI to create custom video content, voiceovers and even “synthetic influencers.” These models don’t just learn from user behaviour; they actively experiment on users to improve engagement metrics.

The Digital Catapult’s 2026 forecast expects up to 50% of all branded social media content in the UK to be AI‑assisted within two years. That means the lines between human creativity and machine manipulation will blur further.

AI Will Control Not Just What You See — But What Exists

As generative AI floods feeds with synthetic images and videos, genuine human posts risk being drowned out. Platforms like TikTok already use AI‑recommended music, filters and automated captions. In time, almost all content may be machine‑created for human consumption, making authenticity an exception rather than the norm.

Regulation Will Lag Behind

Policymakers are aware of these issues. Yet by the time any restrictions become law, platforms will have evolved beyond their scope. Most new and planned rules, including the EU’s Artificial Intelligence Act and the UK’s upcoming AI Regulation Framework (2025‑26), focus on risk mitigation, not on dismantling the monetised manufacture of attention.

It’s a race regulators are likely to lose.

A Real‑World View

AI’s domination of social media is not an accident — it’s a feature of the platform economy. The systems are designed to keep users engaged, not enlightened.

In the UK, this means a future where:

- Reality becomes negotiable (AI‑generated news, influencers, and imagery dominate discourse).

- Mental health deteriorates through comparison, information overload and manipulation.

- Public debate fragments, as algorithms reward emotional extremes over accuracy or civility.

And yet, usage will continue to grow. Social media companies have mastered the art of addictive optimism: convincing users that more technology equals better communication — even as it corrodes trust and individuality.

The cynical truth is that AI on social media will get worse precisely because it works. It profitably exploits human habits, and humans — for now — do not want to give it up.

Find Help and Support

We have created professional, high-quality downloadable PDFs at great prices, specifically for personal or business use in the UK. They include help and advice on understanding what Artificial Intelligence is all about and how it can improve your business. Find them here.