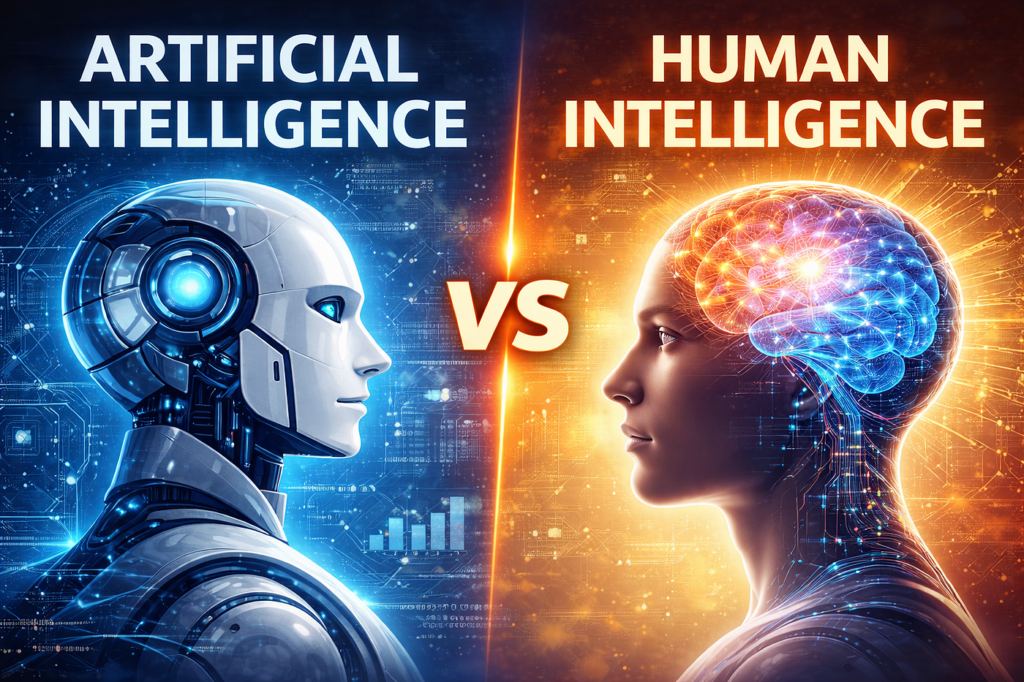

What people mean when they ask “can AI rationalise love, hate and greed?”

AI doesn’t experience emotions — it models patterns around them

Modern AI can be very good at spotting signals that humans associate with love, hate or greed (words, tone, behaviour, spending, clicks, facial expressions, biometrics). But that’s not the same thing as feeling.

A useful way to think about it:

- Human emotion = subjective experience + body signals (heart rate, hormones, stress) + memory + meaning + social context + action tendency.

- AI emotion-handling = data patterns + statistical prediction + optimisation rules (objectives/rewards) + safety filters + learned associations.

A Royal Society Open Science paper puts it plainly in the context of systems like ChatGPT: these AI systems do not have emotions, consciousness or personal motivations.

How AI “rationalises” emotions in practice

1) Classification: labelling text/voice/images as “love”, “hate”, “anger”, etc.

This is the most common approach in real products:

- Sentiment analysis (positive/negative/neutral)

- Emotion detection (anger, joy, sadness, etc.)

- Toxicity/hate speech detection for moderation
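
As a rough illustration of the classification approach above, here is a minimal Python sketch. The `LEXICON` word lists and the `classify_emotion` function are hypothetical and invented for this example; real products typically rely on trained models rather than keyword matching. It shows only the core idea: text goes in, a label comes out, and nothing is felt along the way.

```python
from collections import Counter

# Hypothetical toy lexicon (an assumption for illustration only).
# Production systems learn these associations from labelled data.
LEXICON = {
    "love": {"love", "adore", "cherish", "darling"},
    "hate": {"hate", "despise", "loathe"},
    "anger": {"furious", "angry", "outraged"},
}


def classify_emotion(text: str) -> str:
    """Return the label whose cue words appear most often, or 'neutral'."""
    # Lower-case each word and strip common punctuation from its edges.
    words = [w.strip(".,!?;:\"'").lower() for w in text.split()]
    counts = Counter()
    for label, cues in LEXICON.items():
        counts[label] = sum(1 for w in words if w in cues)
    label, hits = counts.most_common(1)[0]
    return label if hits > 0 else "neutral"


print(classify_emotion("I adore you, darling!"))
print(classify_emotion("The meeting is at noon."))
```

A matcher like this has no grasp of sarcasm, context or culture, so its labels are pattern matches and not readings of anyone's inner state.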

Key limitation: emotion isn’t a universal “read-off-the-face” signal. UK psychology writing has long stressed that inferring emotion from facial expressions is culturally and contextually messy.

2) Prediction: guessing what someone is likely to do next

AI often treats emotion as behavioural probability:

- If messages look affectionate → predict higher relationship intent / retention

- If language looks hostile → predict escalation risk / policy violation

- If buying behaviour shows urgency → predict price sensitivity (or lack of it)
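
To make "emotion as behavioural probability" concrete, the sketch below turns the share of hostile-looking messages into an escalation probability with a logistic function. The function name `escalation_probability` and the `weight` and `bias` values are assumptions chosen for illustration; a real system would fit such parameters to historical data.

```python
import math


def escalation_probability(hostile_msgs: int, total_msgs: int,
                           weight: float = 4.0, bias: float = -2.0) -> float:
    """Map the share of hostile messages to a probability between 0 and 1.

    weight and bias are hypothetical values, not fitted parameters.
    """
    if total_msgs <= 0:
        raise ValueError("total_msgs must be positive")
    hostile_share = hostile_msgs / total_msgs
    # Logistic (sigmoid) function: squashes any score into the range 0..1.
    return 1.0 / (1.0 + math.exp(-(weight * hostile_share + bias)))


for hostile in (0, 5, 10):
    print(hostile, round(escalation_probability(hostile, 10), 3))
```

The output is a risk estimate about likely behaviour, not a measurement of how hostile anyone actually feels.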

3) Optimisation: choosing actions that maximise an objective

This is where optimisation often gets mistaken for “greed”.

AI systems commonly optimise for:

- profit

- engagement

- conversion

- risk reduction

- customer satisfaction scores

They don’t “want” money; they optimise for a target because they were built and rewarded to do so.
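
A minimal sketch of that point, assuming a made-up list of `offers` with invented profit and satisfaction figures: the system simply picks whichever action scores highest on the objective it is given. `best_action` is a hypothetical helper, not any particular product's API.

```python
from typing import Callable


def best_action(actions: list[dict], objective: Callable[[dict], float]) -> dict:
    """Return the action with the highest objective value."""
    return max(actions, key=objective)


# Hypothetical offers with invented numbers, for illustration only.
offers = [
    {"name": "discount", "profit": 2.0, "satisfaction": 0.9},
    {"name": "standard", "profit": 5.0, "satisfaction": 0.7},
    {"name": "upsell", "profit": 8.0, "satisfaction": 0.4},
]

# Objective 1: profit only.
profit_only = best_action(offers, lambda o: o["profit"])
# Objective 2: profit weighted by customer satisfaction.
blended = best_action(offers, lambda o: o["profit"] * o["satisfaction"])

print(profit_only["name"], blended["name"])
```

Swapping the objective changes the apparent "behaviour" with no change in motive: here the profit-only objective selects the upsell, while the blended objective selects the standard offer.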

4) Simulation: producing emotionally convincing responses

Large language models can generate empathetic, loving, angry, or remorseful language because they’ve learned what those sound like in human text — and because training and safety techniques steer them towards socially acceptable outputs.

Rosalind Picard (a founder of affective computing) argued that emotions can matter for decision-making and interaction, and explored why systems might need emotion-like mechanisms to function well with humans.

Love: what AI can do, and what it cannot

How AI handles “love” operationally

In the real world, “love” shows up to AI as signals:

- affectionate language (texts, emails, chat)

- relationship patterns (frequency, reciprocity, shared topics)

- photo/video cues (smiles, proximity — with major caveats)

- commitment behaviours (subscriptions, long-term usage, routine)

AI “rationalises” love by converting those signals into:

- scores (likelihood of satisfaction, compatibility, churn risk)

- recommendations (who/what you might “like”)

- next-best-action prompts (when to message, what to offer)
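
One way to picture the conversion from signals to scores and prompts is the sketch below. It reduces a "relationship pattern" to a single reciprocity ratio and uses it to trigger a prompt. The functions, the `threshold` value and the prompt wording are all assumptions for illustration; real systems would combine many more signals.

```python
def reciprocity(sent: int, received: int) -> float:
    """Ratio of the smaller message count to the larger, from 0.0 to 1.0."""
    if sent == 0 and received == 0:
        return 0.0  # no interaction at all
    return min(sent, received) / max(sent, received)


def next_best_action(sent: int, received: int, threshold: float = 0.5) -> str:
    """Pick a prompt based on a hypothetical reciprocity threshold."""
    if reciprocity(sent, received) < threshold:
        return "suggest a message"
    return "no prompt"


print(reciprocity(10, 5), next_best_action(10, 5))
print(reciprocity(10, 2), next_best_action(10, 2))
```

The score summarises counts of messages. It says nothing about whether either person feels attachment.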

Where humans differ

Human love is deeply embodied and neurologically complex. Neuroscience research continues to map how different kinds of love recruit brain systems differently.

That doesn’t prove a single “love centre” — it shows love is integrated with reward, social bonding, memory and meaning.

AI has none of that inner life. It can model correlates; it does not have attachment.

Hate: detection, moderation, and the risk of amplification

How AI “rationalises” hate

In platforms and workplaces, “hate” becomes:

- prohibited slurs / dehumanising language

- threats and harassment patterns

- coordinated behaviour (brigading, repeated targeting)

- network effects (who shares what, and how fast)

AI systems help by:

- flagging content for human review

- ranking down harmful content

- blocking repeat offenders

- generating summaries for moderators
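
The actions listed above can be sketched as a simple threshold policy. The toxicity score is assumed to come from some upstream classifier, and the thresholds (`review_at`, `demote_at`, `block_after`) are hypothetical values, not any platform's actual rules.

```python
def moderation_action(toxicity: float, prior_strikes: int,
                      review_at: float = 0.5, demote_at: float = 0.8,
                      block_after: int = 3) -> str:
    """Map a toxicity score (0..1) and a strike count to a moderation step."""
    if toxicity >= demote_at and prior_strikes >= block_after:
        return "block"          # repeat offender posting severe content
    if toxicity >= demote_at:
        return "rank_down"      # reduce the content's reach
    if toxicity >= review_at:
        return "human_review"   # uncertain case: send to a moderator
    return "allow"


print(moderation_action(0.9, 4))
print(moderation_action(0.6, 0))
```

Routing the uncertain middle band to human review reflects the point that the system executes policy and probability; it does not judge intent.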

Where humans differ

Humans experience hate with:

- physiological arousal (stress response)

- identity and group psychology

- rumination and memory

- moral emotions (anger, disgust, contempt)

AI can classify and act, but it does not feel rage, contempt or disgust — it executes policy and probability.

A real-world caution

Emotion inference tech can be intrusive and risky, especially in public settings. UK civil society research warns about “inferential biometrics” that claim to infer internal states (including emotions and intentions), noting governance and risk management haven’t kept pace with deployment appetite.

Greed: when “optimisation” looks like desire

Why AI can appear greedy

“Greed” is a human motive: wanting more, often beyond need, sometimes at others’ expense.

AI looks “greedy” when:

- it’s rewarded for profit/engagement only

- constraints (fairness, safety, consumer protection) are weak

- it finds strategies humans didn’t anticipate

Examples:

- dynamic pricing that pushes margins to the limit

- ad auctions optimised for click-through, not wellbeing

- trading systems optimised for return under risk limits

- recommendation feeds optimised for attention, not truth

Where humans differ

Human greed is tied to:

- scarcity fears

- status and comparison

- dopamine-driven reward learning

- social norms and moral restraint

AI’s equivalent is simply: a defined objective + feedback loop.
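
That "objective + feedback loop" can be sketched with a toy pricing example. The linear `demand` curve, the step size and the `price_cap` constraint are all assumptions invented for illustration. The loop keeps raising the price while revenue improves, and stops earlier only if a constraint tells it to.

```python
from typing import Optional


def demand(price: float) -> float:
    """Hypothetical linear demand curve: fewer buyers as price rises."""
    return max(0.0, 100.0 - 2.0 * price)


def revenue(price: float) -> float:
    return price * demand(price)


def optimise_price(start: float, step: float = 1.0,
                   price_cap: Optional[float] = None,
                   max_rounds: int = 1000) -> float:
    """Raise the price step by step while the feedback (revenue) improves."""
    price = start
    for _ in range(max_rounds):
        candidate = price + step
        if price_cap is not None and candidate > price_cap:
            break  # constraint reached: stop regardless of revenue
        if revenue(candidate) <= revenue(price):
            break  # feedback says no further improvement
        price = candidate
    return price


print(optimise_price(10.0))                  # unconstrained
print(optimise_price(10.0, price_cap=20.0))  # with a price cap
```

Without the cap, the loop climbs to the revenue-maximising price for this toy curve; with the cap, it stops at the limit. Nothing in the loop resembles wanting more. The only thing that changed the outcome was the constraint.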

This is why governance matters. The UK regulator’s view on biometric processing emphasises special-category data risks and the need for fairness, transparency and compliance when systems affect people.
