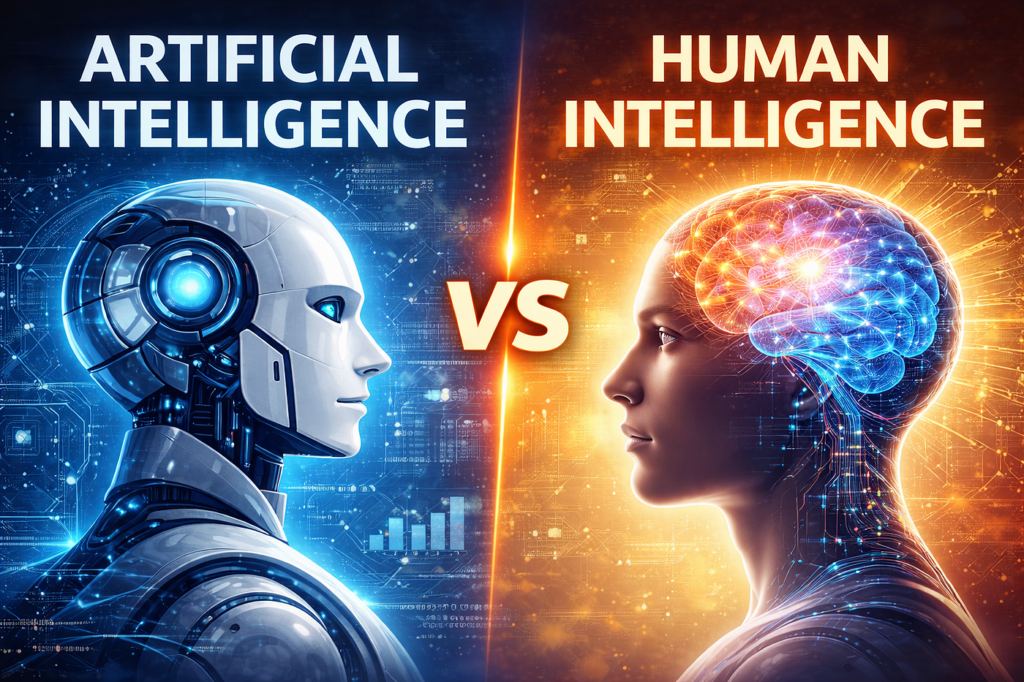

Empathy AI refers to artificial intelligence systems designed to recognise, interpret and respond to human emotions, whether expressed through voice, facial expressions, word choice or behavioural data.

It’s not genuine sympathy or feeling but rather simulated understanding based on emotional cues.

AI engineers call this “artificial emotional intelligence” or affective computing. The technology’s goal is to make machines communicate in ways that feel more human — whether in healthcare chatbots, customer service, education, or therapy settings.

In simple terms, empathy AI doesn’t feel emotion. It detects and imitates emotional awareness so humans can interact more comfortably with digital systems.

What Is Its Purpose?

1. Improving Human–Machine Interaction

Empathy AI aims to make communication between people and technology more natural and less mechanical. Voice assistants like Amazon Alexa, Google Assistant and Microsoft’s Copilot are trained to adjust tone and language depending on user emotion (impatience, confusion, stress).

2. Emotional Support and Mental Health Assistance

Several UK mental health platforms — such as Wysa, Lumen and Ellie AI — use empathy AI to offer conversation-based therapy support.

The purpose is to fill the gap in human counsellor availability, providing round‑the‑clock help to those waiting for mental‑health services.

3. Customer and Patient Care

Empathy AI is increasingly used by banks, call centres, and healthcare providers.

For example:

- Lloyds Bank tested AI systems trained to sense frustration in callers’ voices and automatically escalate to a human agent.

- The NHS AI Lab has trialled systems that monitor patient feelings during virtual consultations to improve “bedside manner” in remote care.
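As a rough illustration of the escalation idea in the first example, the sketch below hands a call to a human once the average frustration score over the last few utterances crosses a threshold. The class name `EscalationMonitor`, the 0.7 threshold and the three-utterance window are all assumptions made for this example, and the scores are presumed to come from some upstream voice model. It does not describe how any bank's system actually works.

```python
from collections import deque


class EscalationMonitor:
    """Hypothetical rule: escalate when recent frustration stays high."""

    def __init__(self, threshold=0.7, window=3):
        self.threshold = threshold          # assumed cut-off, not a published value
        self.scores = deque(maxlen=window)  # keeps only the most recent scores

    def update(self, frustration_score):
        """Record a score in [0, 1]; return True if the call should go to a human."""
        self.scores.append(frustration_score)
        window_full = len(self.scores) == self.scores.maxlen
        average = sum(self.scores) / len(self.scores)
        return window_full and average >= self.threshold


monitor = EscalationMonitor()
decisions = [monitor.update(score) for score in [0.2, 0.9, 0.8, 0.9]]
print(decisions)
```

Averaging over a window, rather than reacting to a single loud moment, is one way to avoid escalating on a one-off spike.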

4. Education and Training

Empathy AI is used to simulate patient or client behaviour during professional training. Medical students, for instance, can practise breaking bad news to an emotionally responsive digital patient before facing real situations.

How Empathy AI Works

- Emotion Detection: Uses natural‑language processing (NLP) and image or voice recognition to identify emotional cues. For example, a raised voice may indicate anger, or long pauses might signal anxiety.

- Context Analysis: Cross‑references emotional cues with topics and history of interaction (are you calling about finances, illness, or frustration with a product?).

- Response Generation: The AI tailors replies — tone, word choice, rhythm — to sound empathetic (“I understand this must be stressful”).

- Continuous Learning: Models refine responses as they observe user satisfaction or repeated engagement.
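The first three steps above can be sketched in a few lines of Python. This is a toy illustration only: the keyword lists in `CUES`, the reply wording in `TEMPLATES` and every function name are invented for this example. A production system would replace the keyword lookup with trained language, voice or image models, and the continuous-learning step is left out.

```python
from dataclasses import dataclass, field

# Hypothetical cue lexicon standing in for a trained emotion model
CUES = {
    "anger": {"furious", "ridiculous", "unacceptable", "angry"},
    "anxiety": {"worried", "scared", "nervous", "anxious"},
    "sadness": {"sad", "hopeless", "lonely", "upset"},
}

# Hypothetical reply templates, one per detected emotion
TEMPLATES = {
    "anger": "I'm sorry this has been frustrating. Let's sort out your {topic} issue.",
    "anxiety": "I understand this must be stressful. Let's take your {topic} question step by step.",
    "sadness": "That sounds hard. I'm here to help with your {topic} concern.",
    "neutral": "Thanks for getting in touch about {topic}. How can I help?",
}


@dataclass
class Conversation:
    topic: str
    history: list = field(default_factory=list)  # emotions from earlier turns


def detect_emotion(text):
    """Emotion detection: count lexicon hits and return the best-matching emotion."""
    words = {w.strip(".,!?").lower() for w in text.split()}
    scores = {emotion: len(words & cues) for emotion, cues in CUES.items()}
    best = max(scores, key=scores.get)
    return best if scores[best] > 0 else "neutral"


def analyse_context(emotion, convo):
    """Context analysis: if this turn looks neutral, carry over the previous emotion."""
    if emotion == "neutral" and convo.history:
        emotion = convo.history[-1]
    convo.history.append(emotion)
    return emotion


def generate_response(emotion, convo):
    """Response generation: pick a template and fill in the conversation topic."""
    return TEMPLATES[emotion].format(topic=convo.topic)


def respond(text, convo):
    emotion = analyse_context(detect_emotion(text), convo)
    return emotion, generate_response(emotion, convo)


convo = Conversation(topic="billing")
print(respond("This is ridiculous and unacceptable!", convo))
print(respond("Okay.", convo))  # neutral wording, but the earlier anger carries over
```

Even this toy version shows why nuance is hard: the detector sees only surface cues, so a sarcastic "great, just great" would pass as neutral.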

How Effective Is It?

Measured Success

According to the Cambridge Institute for Affective Computing (2025), empathy AI systems correctly identify human emotions between 65% and 80% of the time under controlled lab conditions.

However, accuracy drops significantly in real‑world conditions involving varied accents, background noise and multi‑layered emotions, sometimes falling below 50%.

The Alan Turing Institute’s behavioural AI report (2025) concluded:

“Empathy AI performs well at pattern recognition but poorly at nuance. It detects anger more reliably than sadness or subtle emotional states such as guilt or irony.”
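A single accuracy figure can hide exactly the kind of gap described in that quote. The short sketch below computes overall accuracy alongside a per-emotion hit rate (recall). The labels are invented purely for illustration and are not taken from any of the studies cited here; the function names are likewise assumptions.

```python
from collections import Counter


def overall_accuracy(true_labels, predicted_labels):
    """Share of all samples where the predicted emotion matches the true one."""
    correct = sum(t == p for t, p in zip(true_labels, predicted_labels))
    return correct / len(true_labels)


def per_emotion_recall(true_labels, predicted_labels):
    """For each true emotion, the share of its samples that were detected correctly."""
    totals = Counter(true_labels)
    hits = Counter(t for t, p in zip(true_labels, predicted_labels) if t == p)
    return {emotion: hits[emotion] / totals[emotion] for emotion in totals}


# Invented example data: 4 angry, 4 sad and 2 guilty speakers
true_labels = ["anger"] * 4 + ["sadness"] * 4 + ["guilt"] * 2
predicted = [
    "anger", "anger", "anger", "anger",       # anger is always caught
    "sadness", "sadness", "anger", "neutral",  # sadness is caught half the time
    "sadness", "neutral",                      # guilt is never caught
]

print(overall_accuracy(true_labels, predicted))    # 0.6
print(per_emotion_recall(true_labels, predicted))  # anger 1.0, sadness 0.5, guilt 0.0
```

In this made-up case the headline figure of 60% conceals a system that never detects guilt at all.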

Emotional Subtlety Problem

Emotions are contextual and culturally variable. An AI may recognise vocal tremor but not understand whether it reflects fear, excitement, or fatigue.

In the UK, humour and understatement complicate this further — sarcasm, irony and dry wit can confuse empathy algorithms trained on more direct American speech samples.

User Satisfaction

A 2024 Ofcom survey found that 58% of British users reported “positive or helpful” experiences when dealing with empathetic chatbots in healthcare or finance.

But 32% found responses “patronising or artificial,” noting they could tell the “kindness” was scripted.

Real‑World Examples in the UK

NHS and Healthcare

- Wysa (used by some NHS trusts) provides therapeutic conversation and mood tracking via text AI. Early studies published in the British Journal of Psychiatry Open (2024) found improvements in anxiety scores among 70% of users over 12 weeks — though the researchers noted that “human follow‑up remains essential.”

Customer Service and Retail

- British Airways piloted empathy AI in its help‑centre chatbot to defuse irate passenger interactions. Complaints de‑escalated faster, but overall satisfaction improved by only 10% — suggesting limited long‑term emotional resonance.

Education

- At University College London (UCL), “emotional tutoring” AI is being trialled to monitor student stress during remote learning and adapt workloads. Researchers there report improved engagement but note challenges in respecting privacy boundaries.

Can Empathy AI Ever Really Work Effectively?

Short‑Term Outlook (2025–2030)

Expect improved consistency but not genuine empathy. AI will get better at identifying speech tone, facial micro‑expressions and text sentiment.

Systems could exceed 85% accuracy in detecting basic emotions such as happiness, sadness and anger within the next five years.

However, detecting blended or masked emotions — e.g. polite frustration or quiet despair — will remain elusive because it requires contextual human understanding, not data correlation.

Long‑Term Outlook (beyond 2035)

Frontier research now focuses on integrative models that combine physiological data (heartbeat, sweat response), environmental data (context) and linguistic data. These “multimodal empathy AIs” could theoretically approach 90–95% accuracy, but true human‑like empathy would still be an illusion: a high‑fidelity simulation rather than genuine feeling.

Dr Huma Shah, Associate Professor of Artificial Intelligence at Coventry University, notes:

“AI can approximate empathy in behaviour but not in consciousness. The difference is moral understanding — machines can acknowledge distress but cannot care about it.”

A cynical yet realistic view: empathy AI will function well enough for comfort and commerce, but never as a genuine emotional substitute.

Why It Matters

Empathy AI could soften the edges of automation. Machines that appear to “understand” emotional tone can reduce frustration and improve mental‑health access.

Still, the risk of emotional manipulation remains — corporations or governments could use AI empathy to placate rather than truly help users.

The Royal Society’s 2026 Ethics of AI report warns:

“Simulated empathy risks becoming performance empathy. Users may feel heard without being helped.”

How It Could Be Refined in the Future

To improve authenticity and trust, future empathy AI must:

- Use culturally adaptive models — trained on regional speech and behaviour (especially needed in Britain’s diverse society).

- Show transparent reasoning — explain why it inferred a mood or made a suggestion.

- Combine AI with human oversight — especially in mental health or medical contexts.

- Focus on ethical deployment: using empathy AI to support users, not to manipulate consumer behaviour.

A Real‑World Summary: Strengths and Shortcomings

| Feature | Current Capability (2025–26) | Future Potential | Limitation |
|---|---|---|---|
| Emotion detection | 65–80% accuracy | 90% (basic emotions) | Cultural confusion, sarcasm |
| Response quality | Helpful, polite | More nuanced | Still feels scripted |
| Healthcare application | Useful supplementary tool | Preventive mental‑health support | Must retain human supervision |
| Authentic empathy | Simulated | Improved imitation | Never genuine understanding |

References (UK and International)

- The Alan Turing Institute – Behavioural AI and Affective Computing Review, 2025

- British Journal of Psychiatry Open – Digital Cognitive Therapy with Wysa, 2024

- Ofcom – Public Perceptions of Conversational AI, 2024

- Royal Society – Ethics of Emotional AI, 2026

- Coventry University Centre for Computational Intelligence – Empathy AI Research Brief, 2025

Final Thought: The Illusion of Understanding

Empathy AI can recognise feelings, respond politely, and sometimes even comfort people — but it doesn’t feel alongside them. Its purpose is not to care, but to bridge communication gaps between humans and machines.

It will become more convincing, even charming. Yet, as many experts warn, the better empathy AI performs, the easier it is to forget that it isn’t truly human.

So, will empathy AI ever really work?

Yes — as imitation. But never as emotion.
