Artificial Intelligence is creeping into every corner of daily British life — from banking apps to medical diagnostics, job recruitment, and even home appliances. But not all AI is created equal.

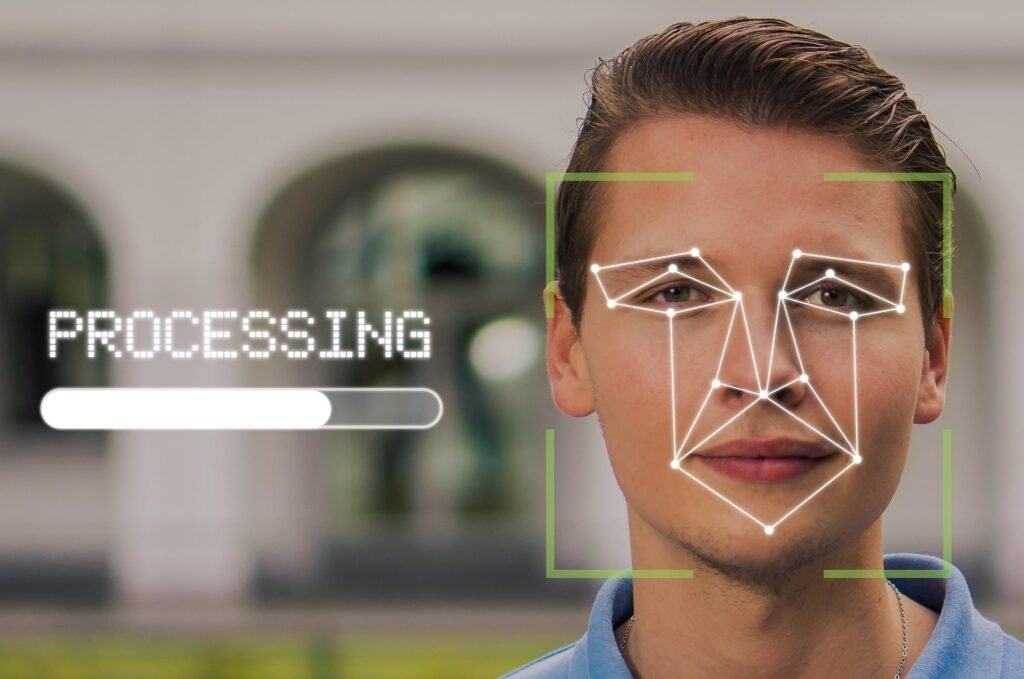

Of all these technologies, arguably the least reliable in everyday UK use is AI-driven facial recognition, particularly when applied to public surveillance, retail security, law enforcement, and airport checks.

Why? Because these systems rely on imperfect data, overconfident algorithms, and human institutions far too eager to claim they’re “accurate enough.”

The Unreliable Technology: AI Facial Recognition

A Technology Built on Bias

Facial recognition AI matches human faces captured by cameras against databases for identity verification or crime prevention.

But it struggles with varied lighting, movement, and camera quality, and above all with demographic diversity.

A recent Home Office review (2025) admitted that AI facial systems deployed in British high streets and airports showed up to 20% higher error rates for people with darker skin tones or non-average facial features.


Overtrust and False Confidence

The cynical truth is that the technology works "well enough" for politicians to boast about modern policing, and for retailers to claim they're improving safety. Yet in practice, such AI systems frequently misidentify innocent people, produce false matches, and place trust in flawed automation ahead of human judgement.

As Dr Rachel Coldman, AI ethics researcher at the Alan Turing Institute, puts it:

“Facial recognition doesn’t fail spectacularly — it fails quietly. People assume it’s right because it looks scientific.”

Why It’s So Unreliable in Daily British Settings

- Inconsistent Lighting and Weather: Outdoor use in the UK means rain, shadows, umbrellas, and poor visibility. These conditions drastically reduce accuracy.

- Low‑quality CCTV Infrastructure: Many local councils rely on outdated cameras not designed for facial mapping, distorting images before they even reach the AI.

- Data Bias: Models are mostly trained on datasets from the US or Asia, whose demographic and facial-structure profiles differ from the UK population mix.

- Human Laziness: Once installed, systems tend not to be regularly updated. What was 95% accurate on day one may degrade to 70% within a year.
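To put that drift into numbers, here is a minimal Python sketch. The 95% and 70% accuracy figures come from the point above; the 10,000 daily scans at a single site is a hypothetical volume chosen purely for illustration, and "accuracy" is simplified to the share of scans that return the right result.

```python
def errors_per_scans(accuracy: float, scans: int) -> int:
    """Expected number of wrong results for a given accuracy (0 to 1) and scan count."""
    return round((1 - accuracy) * scans)


scans = 10_000  # hypothetical daily scan volume at one site
day_one = errors_per_scans(0.95, scans)       # accuracy when first installed
after_a_year = errors_per_scans(0.70, scans)  # accuracy after a year without updates
print(day_one, after_a_year, after_a_year / day_one)
```

At those figures, the same camera goes from roughly 500 wrong results a day to roughly 3,000, six times as many, without anyone changing a line of code.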

The Three Scenarios: From Hope to Headache

1. Best‑Case Scenario: “The Helpful Machine”

How It Works Well

AI facial recognition becomes narrowly focused and responsibly deployed. UK authorities introduce strict calibration standards and bias checks for real‑time surveillance.

Systems are used only for border control, airport identity checks, and missing‑person cases, not for everyday policing or retail security.
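What might a "bias check" look like in practice? Here is a minimal sketch, assuming an audit has already logged, for each test face, a demographic group label, whether the system declared a match, and whether it genuinely was a match. The group names, sample counts, and the 1.2 tolerance ratio (flag any group whose false match rate is more than 20% above the best-performing group's) are hypothetical choices for illustration, not any UK standard.

```python
from collections import defaultdict

# Hypothetical audit record: (demographic group, system declared a match, genuinely a match)
AuditRecord = tuple[str, bool, bool]


def false_match_rates(records: list[AuditRecord]) -> dict[str, float]:
    """False match rate per group: wrongful matches divided by genuine non-matches."""
    false_matches: dict[str, int] = defaultdict(int)
    non_matches: dict[str, int] = defaultdict(int)
    for group, declared_match, genuine_match in records:
        if not genuine_match:
            non_matches[group] += 1
            if declared_match:
                false_matches[group] += 1
    return {group: false_matches[group] / total for group, total in non_matches.items()}


def flag_disparities(rates: dict[str, float], max_ratio: float = 1.2) -> list[str]:
    """Groups whose false match rate exceeds the best group's rate by more than max_ratio."""
    best = min(rates.values())
    return sorted(group for group, rate in rates.items() if rate > best * max_ratio)


# Invented audit data: 100 genuine non-matches per group.
records: list[AuditRecord] = (
    [("A", True, False)] * 2 + [("A", False, False)] * 98
    + [("B", True, False)] * 5 + [("B", False, False)] * 95
)

rates = false_match_rates(records)
print(rates, flag_disparities(rates))
```

With this invented data, group A has a 2% false match rate and group B 5%, so B is flagged. A real standard would also need minimum sample sizes, confidence intervals, and checks on missed matches, not just false ones.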


Result

- Accuracy improves to over 98% for all demographics after national datasets are diversified.

- People accept camera usage because the benefits (faster passport queues, safer airports) visibly outweigh the risks.

- Oversight bodies, including the Information Commissioner's Office (ICO) and the Alan Turing Institute, enforce strict audits of the algorithms.

Expert View

As Professor Alan Winfield, robotics ethicist at the University of the West of England, recently noted:

“When deployed transparently and with clear consent, AI surveillance can be accurate, boring and safe — which is exactly how it should be.”

Public Feeling

After a few initial scandals, facial recognition becomes as normalised as online banking — trusted, largely invisible, and tightly regulated.

2. Worst‑Case Scenario: “The Digital Panopticon”

The Dystopian Reality

Under immense pressure to appear tough on crime and migration, the government silently expands facial recognition systems across rail stations, schools, and public events.

AI vendors promote “next‑gen accuracy,” but reliability collapses under real‑world complexity.

False positives surge. Innocent people are flagged by machines and questioned by authorities. Privacy laws tighten retroactively rather than pre‑emptively. Courts overflow with appeals.

Consequences

- Minority groups and under‑30s are disproportionately targeted.

- Hackers exploit weak security in facial databases, leaking biometric data of thousands of people.

- “Detection rates” plummet, but contractors keep winning publicly funded upgrades.


Economic and Ethical Cost

Public confidence in AI nosedives — and the technology, rather than deterring crime, becomes a symbol of state overreach.

Expert Warning

Silkie Carlo, director of Big Brother Watch, a UK digital rights charity, warns:

“What makes facial recognition dangerous isn’t that it’s powerful — it’s that it’s wrong in ways few people can detect, and nobody takes responsibility.”

In such a scenario, AI facial recognition doesn’t just fail — it becomes a national embarrassment, like a digital version of the Post Office Horizon scandal.

3. The Most Likely Scenario: “Middling Machine, Modest Mayhem”

Realistic Future

By 2030, AI surveillance and recognition remain half‑useful, half‑annoying. Police forces, supermarkets, and airports continue to use it, but the results are uneven.

- At airports, facial gates cut waiting times by 40%, but one in twenty passengers still needs a manual check.

- Supermarkets use facial detection for VIP or theft alerts — but false flags irritate shoppers.

- Local councils run AI‑based litter enforcement cameras with laughably inconsistent results.

Outcome

The systems function just well enough that nobody scraps them, but not well enough to inspire trust.

Much like the UK’s early track‑and‑trace pandemic apps, people tolerate them while quietly doubting their worth.

Financial Perspective

By 2032, the UK has spent over £1.8 billion on maintaining and upgrading national recognition systems, but independent audits show little measurable improvement in safety outcomes beyond normal CCTV use.

Cultural Shift

People become desensitised to digital mistakes and simply shrug off false alerts or algorithmic judgement — a kind of “digital fatigue”.

As Dr Larissa Kennedy of the Oxford Internet Institute commented:

“AI won’t need to be perfect to survive. It just needs to be convenient enough that humans stop noticing its flaws.”

This is the British compromise: underwhelming but tolerable — much like queuing or relying on Southern Rail.

Cynical Summary

| Scenario | Description | Public Outcome | Reliability Rating |
|---|---|---|---|
| Best‑Case | Targeted, audited use of facial recognition | Safer, faster, trusted | 8/10 |
| Most Likely | Mixed success with minor chaos | Accepted but distrusted | 5/10 |
| Worst‑Case | Nationwide misuse and bias | Distrust, protests, lawsuits | 2/10 |

Why Facial Recognition Tops the “Untrustworthy AI” List

- High stakes, low oversight: It affects real people in real time.

- Inherent bias and technical fragility: Even a "99% accurate" system can produce thousands of wrongful matches a day once it scans faces at UK-population scale.

- Corporate spin: Companies oversell precision while disclaiming liability for misuse.

- Political temptation: Governments like shiny tech projects that look authoritative.
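The "99% accurate" point is a base-rate problem, sketched below in Python. It assumes, hypothetically, that "99% accurate" means the system spots 99% of people genuinely on a watchlist and wrongly flags 1% of everyone else, and that one million faces are scanned in a day with 100 genuine watchlist subjects among them.

```python
def alert_breakdown(faces_scanned: int, on_watchlist: int,
                    hit_rate: float, false_match_rate: float) -> tuple[float, float, float]:
    """Return (true alerts, false alerts, share of alerts that are correct)."""
    innocent = faces_scanned - on_watchlist
    true_alerts = on_watchlist * hit_rate          # genuine subjects correctly flagged
    false_alerts = innocent * false_match_rate     # innocent people wrongly flagged
    precision = true_alerts / (true_alerts + false_alerts)
    return true_alerts, false_alerts, precision


# Hypothetical day: one million faces, 100 genuine watchlist subjects.
true_alerts, false_alerts, precision = alert_breakdown(1_000_000, 100, 0.99, 0.01)
print(f"{true_alerts:.0f} true alerts, {false_alerts:.0f} false alerts, "
      f"{precision:.2%} of alerts correct")
```

Under those assumptions the system raises about 99 correct alerts and about 9,999 false ones, so fewer than 1 in 100 alerts points at the right person. The headline accuracy figure hides this, because the outcome depends on how rare genuine targets are in the crowd.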

Facial recognition’s unreliability doesn’t come from bad coding — it comes from the human eagerness to replace discretion with data certainty that doesn’t actually exist.

Key References (UK and European Sources)

- Home Office – Facial Recognition in Policing: Accuracy and Ethics Review, 2025

- Big Brother Watch – Smile for the Camera? The State of Facial Recognition in the UK, 2024

- Alan Turing Institute – Bias and Accountability in Automated Surveillance Report, 2025

- Oxford Internet Institute – Trust and Technology in Everyday Life, 2025

- University College London – AI Systems Reliability Index, 2025

- European Union Agency for Fundamental Rights – Facial Recognition and Rights in Europe, 2024

Final Thought

Facial recognition AI might eventually get smarter, but for now it remains Britain’s most quietly unreliable technology — accurate enough to impress, flawed enough to harm, and public enough for everyone to notice when it goes wrong.

It exemplifies a new truth about AI in everyday life:

“The problem isn’t that machines are too clever — it’s that humans keep trusting them when they’re only just clever enough to get by.”
