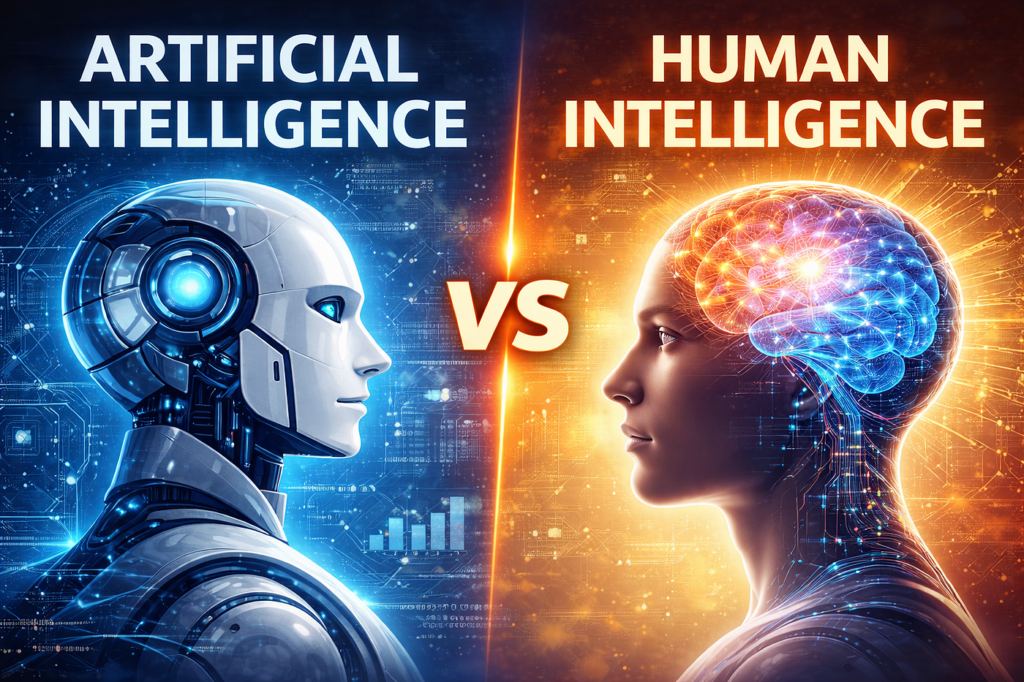

Artificial Intelligence has become part of everyday life in the UK — from retail to the NHS — but public opinion isn’t purely enthusiastic. AI now provokes genuine fear and alienation, not just fascination.

According to a 2025 survey by the Tony Blair Institute for Global Change, more than half of UK adults view AI primarily as a risk, not an opportunity. This anxiety centres on technologies that feel intrusive, unaccountable, or beyond human control.

Below is a clear breakdown of the AI systems that worry Britons the most, the reasons behind those fears, and the real‑world consequences already visible in society.

🧠 1️⃣ Deepfakes and Synthetic Media

Why They Frighten People

Deepfakes – AI‑generated images, videos or voices designed to mimic real people – have become the poster child for AI misuse. They blur the boundary between truth and fabrication.

- The Independent (2026) reported a sharp rise in searches for “deepfake scam” and “AI fake video”, with public trust in online content “collapsing faster than platforms can react.”

- Celebrities, politicians, and influencers in the UK have already had manipulated footage of them spread across social media.

Real‑World Effects

- Misinformation: During elections and news events, AI fabrication can distort what the public believes. The Electoral Commission (2025) warned that deepfakes could “damage the democratic process” if voters can’t trust video evidence.

- Personal Harm: Women and teenagers are disproportionately targeted in explicit deepfakes, turning AI into a tool of harassment (Home Office, 2025).

- Erosion of Trust: The cynical outcome is a permanent suspicion of digital evidence — people begin to doubt everything, even what’s real.

💬 “We’ve entered the era where seeing is no longer believing.” – The Guardian, Jan 2026.

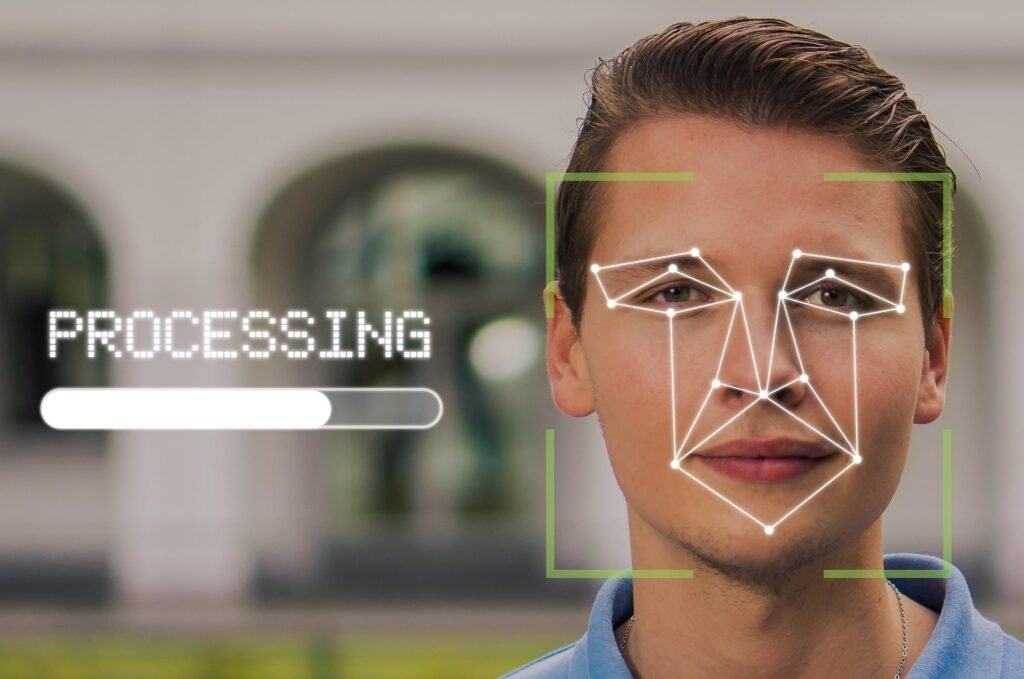

🧍‍♀️ 2️⃣ Facial Recognition and Live Surveillance

Why It Feels Alienating

Facial recognition is promoted as a safety measure, but it often invades privacy. Retail chains, police forces, and councils are quietly installing AI-linked camera systems that track faces in real time.

- South Wales Police and Metropolitan Police have used live facial recognition since 2023, scanning crowds without explicit consent.

- Civil rights groups (Big Brother Watch, 2025) argue that the technology creates a “nation of watched citizens.”

Public Concerns

- Misidentification: Algorithmic bias has led to wrongful matches — especially for women and ethnic minorities.

- Loss of Anonymity: People report feeling constantly under observation in high‑street areas and train stations.

- Mission Creep: Once normalised in security, the same systems are sold to retailers for customer profiling.

Real‑World Effect

A 2025 YouGov poll found 62% of people oppose routine public‑space facial recognition. The technology has become a symbol of state and corporate intrusion, alienating people who feel powerless over their own digital identity.

📷 “You can’t opt out of being spotted.” – Privacy International, 2025.

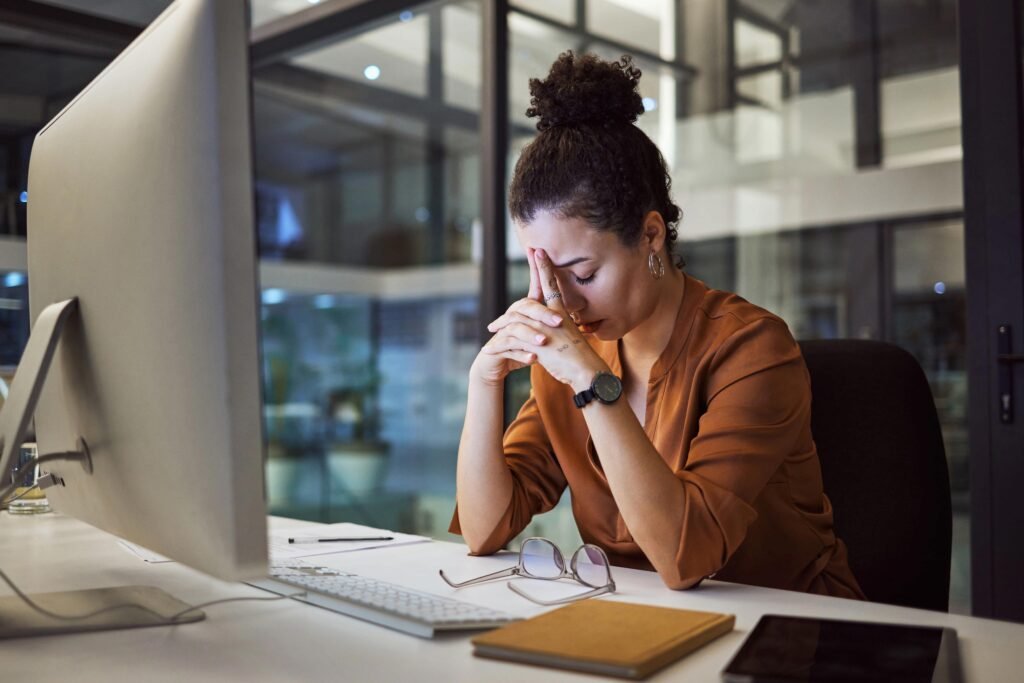

🏦 3️⃣ Workplace and Productivity Surveillance

Why Workers Fear It

AI monitoring technologies are spreading beyond warehouses into offices, tracking keystrokes, emails, and even facial expressions through webcams.

- Amazon UK, BT, and large law firms use algorithmic productivity scoring.

- The Trades Union Congress (TUC) warned in 2025 that 60% of British workers believe AI will be used to “monitor or control them” rather than help them.

Real‑World Impacts

- Loss of Autonomy: Employees describe feeling constantly observed and anxious.

- Unfair Disciplinary Actions: Algorithms flag “under‑performers” using flawed metrics.

- Mental Health Strain: Continuous digital oversight erodes trust between staff and management, leading some unions to label it “digital Taylorism.”

🧾 Cynical truth: what employers call “AI efficiency tools” often feel like digital micromanagers with no empathy.

🕵️‍♂️ 4️⃣ Predictive Policing and Data Profiling

Why It Causes Anxiety

Predictive policing systems use AI to forecast where crimes might occur or who could commit them. Police forces in parts of London, Kent, and Manchester have tested such tools, often built from old arrest data.

- Studies by the Royal United Services Institute (RUSI) found bias baked into historic datasets, potentially over‑targeting poorer or minority communities.

- Critics argue such systems risk turning probabilities into prejudice.

Effects on Public Trust

- Growing belief that AI policing reinforces existing inequalities rather than preventing crime.

- Chilling effect in certain neighbourhoods where residents feel over‑monitored.

- Calls for transparency: the Information Commissioner’s Office (ICO) demanded clearer guidelines on datasets used.

⚖️ The alienation here is social as well as technological — people feel judged by an algorithm they have never met.

💬 5️⃣ Generative Chatbots and “Talking Machines”

Why They Unsettle People

Tools like ChatGPT and Google Gemini, capable of writing, reasoning, and conversing fluently, blur the line between human and machine communication.

Many Britons express discomfort at digital “companions” that mimic empathy but lack real emotion.

According to the Ipsos “What the UK Thinks About AI” survey (2026):

- 59% see AI as a risk to UK society.

- 45% describe generative chatbots as “unnerving” or “too human.”

Real‑World Social Effects

- Erosion of human interaction: Overreliance on chatbots (for customer service or mental‑health advice) reduces contact with actual people.

- Trust issues: AI can sound authoritative even when wrong — creating false assumptions and misplaced faith.

- Identity blur: Teenagers and isolated adults sometimes treat chatbots as friends, prompting psychologists to warn of “synthetic companionship replacing real relationships”.

🗣️ “It’s empathy without the effort — convenient, but hollow.” – Clinical Psychologist quoted in The Telegraph, 2026.

🧬 6️⃣ AI in Healthcare Decisions

Why It Feels Threatening

When AI helps diagnose or prioritise NHS patients, most people welcome the efficiency. But when machines are seen as making life‑and‑death choices, discomfort grows.

- NHS trusts in Manchester and Birmingham are trialling AI triage systems to rank waiting lists and allocate surgery slots.

- Concerns persist over fairness, accountability, and data security — particularly after private‑sector involvement controversies like Google DeepMind’s handling of patient data.

Effects

- Unease among patients: Fear that “the computer decides” without compassion.

- Professional resistance: Some clinicians worry automation will erode doctor‑patient relationships.

- Policy backlash: The Care Quality Commission (CQC, 2025) has called for “human‑in‑the‑loop” guarantees in NHS AI tools to preserve trust.

🏥 Efficiency without empathy risks turning care into calculation.

📱 7️⃣ Social‑Media Algorithms and Emotional Manipulation

The Everyday AI That Shapes Everything

Recommendation engines on TikTok, YouTube, and Instagram — driven by machine learning — may seem harmless entertainment, but users increasingly recognise how manipulative they are.

- Algorithms feed teenagers content that maximises attention, not wellbeing.

- Journalistic investigations (BBC Panorama, 2025) showed teens being drawn to harmful content in minutes due to engagement‑optimising AI.

Impact on British Society

- Addiction & Anxiety: Teen mental‑health referrals have risen sharply; NHS clinicians link part of this to algorithmic influence.

- Political Polarisation: Filter bubbles reinforce tribal thinking across age groups.

- Loss of Agency: People no longer choose content — algorithms choose it for them.

🧠 AI here doesn’t frighten because it’s futuristic; it frightens because it already runs our attention silently.

🧩 Why These Technologies Cause Fear

| Root Cause | Description | Public Response |
|---|---|---|
| Loss of Control | Decisions made invisibly by algorithms | Calls for tighter regulation (DSIT AI Safety Summit, 2025) |
| Job and Identity Threats | Machines performing “human” roles | Anxiety among workers and creatives |
| Privacy Invasion | Massive data collection without consent | 78% want opt‑out options (ICO survey, 2025) |
| Ethical Ambiguity | Lack of oversight or appeal process | Growing distrust of both government and Big Tech |
| Social Alienation | Screens replacing community | Demand for balance between tech and human agency |

🇬🇧 The Broader Effects on UK Society

1. Public Distrust and Political Pressure

AI fear is shaping regulation. The AI Safety Institute (established in 2023 and renamed the AI Security Institute in 2025) was created largely to ease public alarm after high‑profile reports of misuse. Politicians are under pressure to show they can “keep AI under control”.

2. Workplace Alienation

As automation spreads, especially in banking and administration, employees feel replaceable and monitored — leading to resentment and declining morale.

3. Mental‑Health Strain

Constant exposure to algorithmic content fuels anxiety and self‑comparison among young people. Mental‑health charities such as Mind UK warn of an “AI attention crisis.”

4. Digital Inequality

Affluent households adopt beneficial AI tools early, while others face algorithmic bias in credit scoring and hiring. The gap between AI users and AI victims subtly widens.

⚖️ Managing Fear — and Why It Matters

If distrust grows unchecked, the UK risks a public backlash that stalls innovation. The Centre for Long‑Term Resilience (2025) found 78% of Britons want stronger AI regulation — not just to curb risks, but to restore faith.

People aren’t anti‑technology; they’re anti being experimented on without consent.

Cynically, many companies only act when fear starts to hurt profits — for instance, retailers adding “AI privacy guarantees” purely as marketing tools once customers started to complain.

🚨 In Summary

The AI technologies that scare and alienate people most in the UK today are those that:

- See too much (facial recognition)

- Fake too well (deepfakes)

- Control too tightly (workplace monitoring)

- Decide too mechanically (healthcare algorithms)

- Manipulate too invisibly (social‑media recommendation engines)

Their combined effect is a steady erosion of trust — in media, in employers, in government, and even in one another.

As one cynical analyst told The Financial Times (2025):

“AI isn’t making people fear machines — it’s making them fear how other humans will use machines.”

Opinion

Big companies and governments around the world are selling us the dream and ignoring reality for their own benefit. If we have no say in how it’s used and what data we can allow or deny, then we become part of the machine. There are many advantages and disadvantages of AI technology, but we should have far more say in how it is used on our behalf.

AI is far from perfect, and when it makes mistakes, who pays the price? You can’t take AI to court!

Find Help and Support

We have created professional, high-quality downloadable PDFs at great prices for personal or business use in the UK. They include help and advice on understanding what Artificial Intelligence is and how it can improve your business. Find them here.