AI might be the buzzword on everyone’s lips, but not all artificial intelligence is created equal — and certainly not all of it works properly outside a glossy demo or corporate pitch. In everyday use across the UK, certain AI tools make more mistakes than they fix, leaving consumers frustrated, wrongly flagged, or even discriminated against.

The most unreliable technologies are those that pretend to understand context, emotion, and nuance — things that humans learn through experience, not code. Below is a frank analysis of which types of AI are most error‑prone, why they go wrong, and how this affects ordinary people in Britain today.

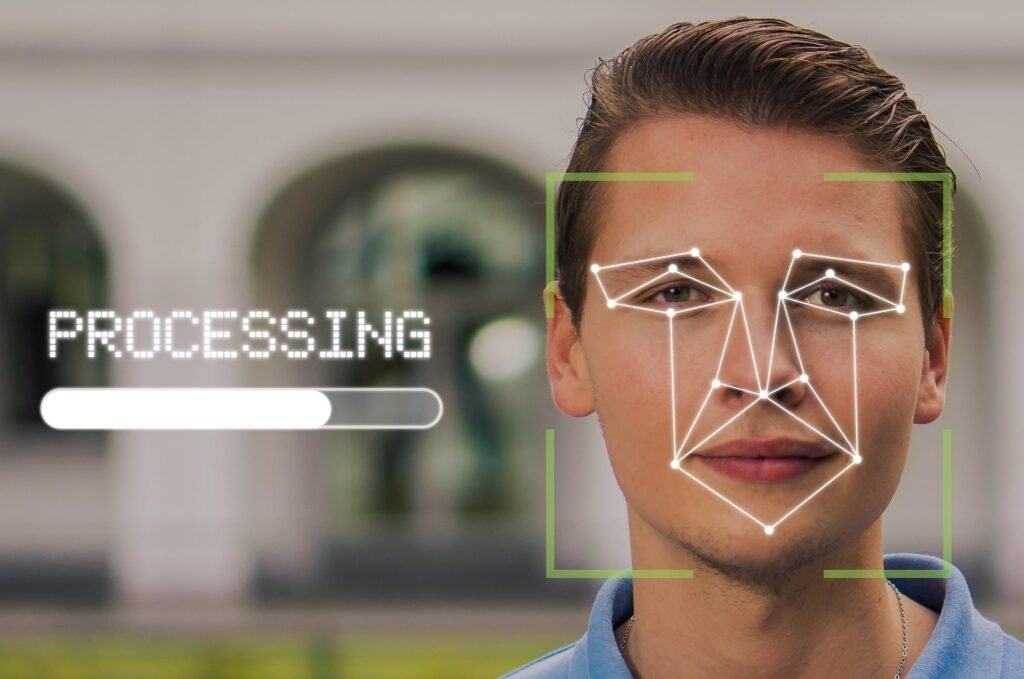

🤖 1️⃣ Facial Recognition and Surveillance AI

Why It’s Everywhere — and Why It’s Usually Wrong

Facial recognition is being sold as the backbone of “smart” security in shops, housing estates, and airports. But in the real world, it’s unreliable, biased, and legally murky.

Real‑World Failures

- The Co‑op and Facewatch Controversy: Several Co‑op supermarkets in southern England used AI facial‑recognition software by Facewatch to deter shoplifters. Big Brother Watch (2024) revealed dozens of wrongful matches — innocent shoppers falsely banned because an algorithm thought they “looked like” someone else.

- South Wales Police trial (2020–2023): Facial‑recognition tech wrongly identified people in public areas nine times out of ten in early tests (Information Commissioner’s Office ruling, 2023). Updates have improved accuracy slightly, but misidentifications still happen.

The Truth

Facial recognition works best on stock photos in good lighting. It fails miserably with masks, poor angles, dark skin tones, or crowded streets — i.e., the real world.

Yet retailers keep installing it because it looks impressive on a PowerPoint slide and lets them say they’re “doing something” about crime while laying off security staff.

Why It’s Dangerous

- Low reliability + high confidence = wrongful suspicion.

- Public accountability is almost nonexistent; firms hide behind “proprietary algorithms”.

- Wastes police time and damages trust between businesses and communities.
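The first point in that list can be made concrete with some base-rate arithmetic. The sketch below uses purely hypothetical numbers (they are not taken from Facewatch, South Wales Police, or any trial mentioned here), and the function and parameter names are invented for illustration. It shows how a system that sounds accurate on paper can still produce alerts that are mostly wrong when very few of the people scanned are actually on a watchlist.

```python
def share_of_alerts_that_are_wrong(footfall, on_watchlist, hit_rate, false_alarm_rate):
    """Return the fraction of all alerts that point at an innocent person."""
    # Alerts raised for people who really are on the watchlist.
    true_alerts = on_watchlist * hit_rate
    # Alerts raised for everyone else who walks past the camera.
    false_alerts = (footfall - on_watchlist) * false_alarm_rate
    return false_alerts / (true_alerts + false_alerts)


# Hypothetical scenario: 10,000 shoppers, 10 of them on a watchlist,
# a system that spots 90% of watchlisted faces and wrongly flags 1% of others.
share = share_of_alerts_that_are_wrong(
    footfall=10_000, on_watchlist=10, hit_rate=0.90, false_alarm_rate=0.01
)
print(f"{share:.0%} of alerts point at an innocent person")  # prints 92%
```

Under these assumed figures, roughly nine alerts in ten are false even though the system is "99% accurate" on innocent faces, simply because innocent shoppers vastly outnumber the people it is looking for.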

Verdict: Facial recognition leads the pack for being confidently, consistently wrong.

🗣️ 2️⃣ Voice Assistants and Speech Recognition

“Sorry, I Didn’t Catch That…”

Voice AI like Amazon Alexa, Google Assistant, and Apple Siri has become part of UK domestic life, but the technology still fails at basic understanding.

Everyday Glitches

- Mishears requests — “turn lights off” becomes “buy more bulbs”.

- Can’t understand regional British accents: Scots, Scousers, and Brummies still baffle the machines.

- Often triggers by accident — especially during TV adverts or casual conversation.

According to Which? (2025), one in four UK users reports daily misfires or wrong responses from smart speakers.

The Cynical Bit

Voice AI exists less to serve you and more to harvest data. Every “mistaken activation” is one more snippet of speech used to train future models.

Britons complain, laugh, and keep buying them anyway — meaning the devices get away with being charmingly incompetent as long as they’re convenient.

Verdict: Still better at spying than listening.

🧠 3️⃣ Generative AI Chatbots

The Overconfident Liars

Generative AIs such as ChatGPT, Claude, and Gemini are useful for writing emails, essays, or code — but they’re chronic hallucinators, often inventing facts with the swagger of authority.

Real Examples in Daily Use

- UK university students caught out when AI‑written citations referred to non‑existent academic papers (Times Higher Education, 2025).

- Companies using chatbots for customer service have delivered incorrect policy information or false account details — errors later costing firms tens of thousands in compensation (reported by BBC News Technology, May 2026).

- Schoolchildren prompted to use AI homework tools got elaborate but wrong science explanations with made‑up references.

Why It Happens

Generative models don’t know anything; they predict what words look good together. They’re expert bluffers, not truth‑tellers.

When connected to business websites, that overconfidence becomes a liability — one British insurer’s chatbot even contradicted its own terms and conditions (Which?, 2025).
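To see why "predicting what words look good together" produces confident errors, here is a toy sketch. It is a tiny bigram model, far simpler than anything behind ChatGPT, Claude, or Gemini, and the training text and function names are made up for illustration. It only counts which word tends to follow which, then always picks the most common follower.

```python
from collections import Counter, defaultdict


def train_bigrams(text):
    """Count, for every word, which words follow it in the training text."""
    words = text.lower().split()
    following = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        following[current][nxt] += 1
    return following


def continue_text(following, start, length):
    """Extend `start` by repeatedly choosing the most frequent next word."""
    out = [start]
    for _ in range(length):
        options = following.get(out[-1])
        if not options:
            break  # the model has never seen anything after this word
        out.append(options.most_common(1)[0][0])
    return " ".join(out)


# Hypothetical "policy" text: refunds take five days, repairs take two weeks.
corpus = "refunds take five days . refunds take five days . repairs take two weeks"
model = train_bigrams(corpus)
print(continue_text(model, "repairs", 3))  # prints: repairs take five days
```

The output reads fluently and is wrong: the training text says repairs take two weeks, but "take five" was the more common pairing, so the model goes with it. Nothing in the procedure checks the answer against the source.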

Why It’s So Popular Anyway

Free labour. A chatbot can stand in for customer‑service reps and copywriters, at least for a while, so companies accept 80% accuracy to save 100% of wages.

Verdict: The world’s most plausible liar — and too cheap to ignore.

🧬 4️⃣ Emotional or “Empathy” AI

Algorithms That Pretend to Feel

This is the latest hype wave: AI that analyses tone, body language, or facial expression to detect emotion. The idea is seductive — marketing systems that know when you’re upset, call‑centre bots that “sense frustration”, even healthcare apps identifying stress.

Real‑World Reliability

- Trials by UK universities (Bath & Nottingham, 2025) found emotion‑tracking algorithms flagged anger in 42% of neutral expressions — apparently, being tired or having a beard counts as rage.

- Companies still buy it, bolstered by HR software promising to “gauge employee mood” through webcams.

Why It’s Flawed

- Human emotion is contextual; algorithms can’t read sarcasm, culture, or hormones.

- The concept is rooted in pseudo‑scientific assumptions — that expressions map neatly to feelings.

- It normalises surveillance of workers and students “for wellbeing” when it’s really for productivity.

The Truth

“Empathy AI” gives bosses the illusion of understanding staff without actually listening to them. It’s cheaper than hiring managers who care, so expect more of it.

Verdict: Fake feelings, real creepiness.

🧮 5️⃣ Predictive Policing and Risk Scoring

AI Meets Human Bias, Doubles It

Several UK police forces and councils are experimenting with “predictive analytics” for crime prevention and welfare fraud detection. These systems promise to spot patterns of risk. In reality? They automate the prejudices already baked into historical data.

UK Examples

- Durham Constabulary’s “HART” algorithm (tested 2016–2023) misclassified suspects, often rating minority offenders as high‑risk and well‑off white offenders as low‑risk. It was quietly retired after public concern.

- Local authority benefit‑fraud prediction pilots (2024) wrongly flagged hundreds of legitimate claimants, overwhelmingly in low‑income areas (Bureau of Investigative Journalism, 2025).

Why It Happens

AI models learn from historical arrests and fraud claims — meaning they reproduce the same biases. “Optimisation” then just means making the bias faster and paperless.
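A minimal sketch of that loop, using invented numbers and names rather than anything from HART or a real council system: two areas have exactly the same arrests per patrol, but one starts with more recorded arrests because it was patrolled more heavily in the past. The "model" simply sends patrols wherever past arrests were recorded.

```python
def allocate_patrols(recorded_arrests, total_patrols):
    """Split patrols between areas in proportion to their recorded arrests."""
    total = sum(recorded_arrests.values())
    return {area: total_patrols * n / total for area, n in recorded_arrests.items()}


def run_rounds(recorded_arrests, arrests_per_patrol, total_patrols, rounds):
    """Repeatedly allocate patrols from the records, then add the new arrests."""
    recorded = dict(recorded_arrests)  # copy so the caller's data is untouched
    for _ in range(rounds):
        patrols = allocate_patrols(recorded, total_patrols)
        for area in recorded:
            # Same arrests-per-patrol everywhere: the areas behave identically.
            recorded[area] += patrols[area] * arrests_per_patrol
    return recorded


# Hypothetical starting records, skewed only by where police used to patrol.
start = {"Area A": 60, "Area B": 40}
end = run_rounds(start, arrests_per_patrol=0.5, total_patrols=100, rounds=5)

share_a = end["Area A"] / sum(end.values())
gap = end["Area A"] - end["Area B"]
print(f"Area A share of recorded arrests: {share_a:.0%}")  # prints 60%
print(f"Gap in recorded arrests: {gap:.0f}")  # prints 70
```

In this sketch the original 60/40 skew never corrects itself, and the absolute gap grows from 20 to 70, even though the two areas are identical by construction. The records describe where police looked, and the system treats that as where the risk is.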

Public Impact

Ordinary Britons risk being labelled suspicious by machines that never have to justify themselves. Meanwhile, officials shield themselves behind “automated decision‑making systems” — avoiding accountability.

Verdict: An algorithmic judge that is quick, cheap, and quietly unjust.

💬 Why These Technologies Stay in Use Despite Failing

| Reason | Explanation (Cynical Translation) |
|---|---|
| Cost saving | Wrong but cheap beats accurate but staffed. |
| Data hunger | Every mistake makes the next version smarter; you’re free training material. |
| Public relations | “AI‑powered” sounds modern; failure stories vanish quietly. |
| Regulatory lag | UK law is still catching up: enforcement weak, fines minor. |

⚠️ The Everyday Effect on UK Life

| Domain | Likely AI Failing | Real‑World Consequence |
|---|---|---|
| Shopping & Security | Misidentification by facial recognition | Innocent people followed or banned |
| Customer Service | Chatbots misstate policies | Wrong bills, denied refunds |
| Education | AI homework help hallucinating facts | Students accused of cheating or copying |
| Healthcare | Emotion‑detection & diagnostic bias | Potential misdiagnosis or mistrust |
| Policing / Councils | Predictive analytics | Targeting vulnerable neighbourhoods |

🔍 Summary

The most unreliable AIs in the UK today — and likely for years to come — are those pretending to be human:

- Facial recognition still can’t tell people apart reliably.

- Voice assistants still mishear half the country.

- Chatbots still make up their own facts.

- “Emotion AI” still can’t read feelings — only faces.

- Predictive policing still confuses policing history with truth.

They’ll all get rolled out anyway because they’re profitable, and because a shiny word like innovation sounds more appealing than cheap automation.

For ordinary Britons, the coming decade means living alongside machines that are confident, persistent — and persistently wrong. When they fail, the companies will say:

“The system learns and improves.”

But until it learns to listen, reason, or care, the most human skill these systems have is making mistakes and blaming someone else for them. That is not good enough, but what choice do we have? We live with the consequences; the AI does not.
