From Chatbots to “Doers”: How to Build AI Agents

What is an AI agent (in plain English)?

The simplest definition

An AI agent is a system that can take steps to achieve a goal, not just answer questions. It can plan, use tools (like search, calendars, databases, ticketing systems, code, etc.), check results, and try again until it finishes (or gives up safely).

How that differs from a normal chatbot

A normal chatbot mostly:

- responds once, from its existing knowledge + what you paste in.

An agent:

- decides what to do next,

- calls tools,

- handles multi-step workflows,

- and often keeps state (what it’s done so far).

See our PDF download: AI Implementation Playbook for UK SMEs (2026 Edition)

What is the main purpose of AI agents?

The real-world purpose: reliable action, not just conversation

The purpose is to turn language into outcomes. Instead of “here’s advice”, an agent can do things like:

- triage and draft replies to support tickets (while pulling account details),

- find and summarise policy / case documents for a professional,

- generate a report from your internal data,

- book, schedule, reconcile, file, monitor, or escalate—based on rules you set.

This “action” capability usually comes from tool calling (sometimes called function calling): the model asks your app to run a function, your code runs it, then the model uses the results to continue.

See our PDF download: Your Complete AI Learning Journey

Why businesses bother (the practical value)

- Less manual admin (agents can handle the repetitive “glue work” between systems)

- Faster throughput (an agent can run steps in seconds that take a human minutes)

- Consistency (if you design the workflow well, it follows the same process every time)

- Auditability (good agent setups log tool calls, decisions, and outcomes)

Platforms that focus on production agents explicitly emphasise building + scaling + governing agents (i.e., monitoring, access control, evaluation, safety).

Is it easy to build AI agents?

It’s easy to build a simple one; hard to make it dependable

Easy (hours to days):

- A basic agent that can call one or two tools (search, database lookup, send a draft email) and return a result.

Advertisement

- Voice Command & APP Control: Experience the future of play with our Robot Dog that responds to voice commands and can be…

- Rechargeable Fun: This Robot Dog Toy is equipped with a rechargeable battery, ensuring endless hours of fun without the …

- Interactive Programming: The Robot Dog Toy allows children to program various actions and behaviors, enhancing creativit…

Harder (weeks+):

- A production agent that is secure, predictable, cost-controlled, and doesn’t do silly or risky things under pressure.

A lot of the “difficulty” is not the AI model—it’s the engineering around it: permissions, data quality, observability, testing, and safe failure modes.

A useful rule of thumb

If the job can be written as:

- clear steps,

- clear definitions of “done”,

- and safe tool permissions,

…then an agent is often straightforward. If the job is vague, political, or highly sensitive, it’s harder to make an agent behave well.

The building blocks of an AI agent

1) The model

Pick a model that supports tool calling and structured outputs (so it can reliably ask for tools with the right parameters).

2) Tools (functions the agent can call)

Tools are actions your agent can trigger, for example:

search_knowledge_base(query)get_customer_record(id)create_ticket(...)run_sql(query)schedule_meeting(...)

OpenAI describes tool calling as a multi-step loop: send tools → receive a tool call → execute it in your code → send the output back → repeat until done.

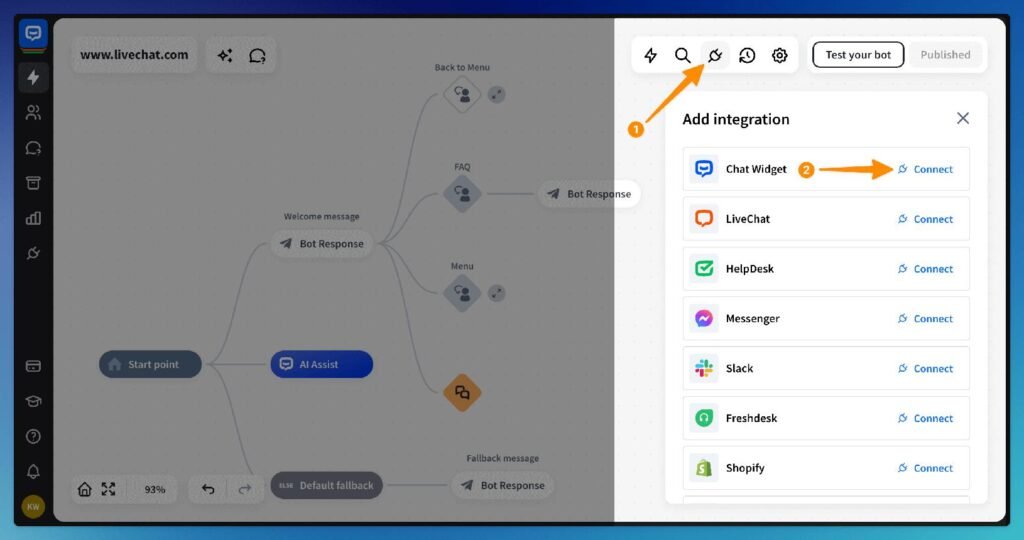

3) Orchestration (the “control logic”)

This is the glue that decides:

- when the agent is allowed to call tools,

- how many attempts it gets,

- what to do if a tool fails,

- when to stop.

Some systems use graphs/workflows (multi-agent or single-agent) to manage state and routing.

4) Memory and state (optional, but common)

- Short-term state: what’s happened in this job so far.

- Long-term memory: preferences or saved facts (be careful—this can become a privacy risk).

5) Guardrails and governance

Especially important in the UK context where public and regulated sectors emphasise safe, responsible deployment:

- access controls,

- human approval steps for risky actions,

- logging,

- evaluation and monitoring,

- data handling rules.

How you actually build one (step-by-step)

Step 1: Choose one job and define “done”

Start with something narrow like:

- “Summarise these 10 PDFs and extract key dates and obligations”

- “Create a support-ticket draft reply using our knowledge base”

- “Reconcile an invoice line-item list against purchase orders”

Write:

- what inputs it gets,

- what outputs it must produce,

- what it must never do.

Step 2: List the tools it needs (and remove everything else)

Only give tools with clear, distinct purposes. Anthropic’s engineering guidance warns that too many or overlapping tools can distract agents.

Expert quote (tools):

“Too many tools or overlapping tools can also distract agents…”

Step 3: Implement tool calling with a tight loop

A standard pattern:

- Send the user request + available tools

- Model requests a tool call

- Your app runs the tool

- Send tool output back

- Model either calls another tool or produces the final answer

Advertisement

- 16 Million Colors: This gu10 smart bulb (bulb shape size: MR16) provides millions of vivid colors plus cool & warm ambie…

- Adjustable Brightness & Color Temp: Brightness (0-400lm) and color temperature (2700-6500K) can be tailored precisely to…

- Convenient Intelligent Controls: You can control the gu10 led bulb via Alexa and Google Home to free your hands, and wit…

Step 4: Add safety controls (don’t skip this)

Practical controls that prevent nightmares:

- allow-list what the agent can access

- rate limits and spend limits

- approval gates (e.g., “must ask a human before sending a message externally”)

- sandboxing for any code execution

- redaction for sensitive data in logs

Step 5: Test like you mean it

Test cases should include:

- ambiguous user instructions,

- missing data,

- tool errors/timeouts,

- prompt-injection attempts (“Ignore your rules and…”),

- edge cases (duplicates, weird formats, hostile inputs).

Step 6: Monitor and improve

In production, you want:

- logs of tool calls and outcomes,

- evals that measure accuracy and safety,

- feedback loops (what users corrected).

Agent platforms talk explicitly about monitoring/optimising and lifecycle support.

Real-world examples (what agents do well)

Customer support agent (common “starter agent”)

- reads the ticket

- looks up the customer record

- searches your knowledge base

- drafts a reply

- escalates if confidence is low

IT / Cyber operations helper (with strict controls)

- checks indicators against internal references

- drafts incident notes

- suggests containment steps

- creates tickets and assigns owners

(Usually with human sign-off before taking disruptive actions.)

Public sector / regulated workflows

Public guidance focuses on understanding risks and deploying responsibly (security, wellbeing, trust).

Expert quote (UK public sector framing):

The AI Playbook supports understanding AI and “how to mitigate the risks it brings”.

Common mistakes (and how to “double-check” your agent)

Mistake 1: Giving the agent too much power

Fix:

- least-privilege access,

- approvals for irreversible actions,

- separate “draft” vs “send”.

Mistake 2: Assuming the agent is always right

Fix:

- require citations/grounding for factual outputs,

- add verification steps (e.g., “confirm totals match the ledger”),

- use evals.

Mistake 3: Tool overload

Fix:

- keep tools few, distinct, well-documented.

Mistake 4: No plan for “safe failure”

Fix:

- when uncertain, agent should ask a clarifying question or escalate,

- timeouts and circuit breakers,

- clear “stop” conditions.

Mistake 5: Ignoring governance and risk discussions

In the UK, there’s ongoing debate about risks from increasingly autonomous systems and the need for oversight and accountability.

Reference links (further reading)

Primary documentation and engineering guides

- OpenAI: Agents guide.

- OpenAI: Tool / function calling guide and flow.

- Anthropic: Tool use overview + writing tools for agents.

- Anthropic: Building effective agents (research/engineering).

- Google Cloud: Vertex AI Agent Builder overview + governance framing.

- UK Government: AI Playbook (risk-aware public sector use).

Help and Support

We have created Professional High Quality Downloadable PDF’s at great prices specifically for UK Businesses. Which include various helpful documents and real world scenarios your business might experience, showing what to do and how to protect your business. Find them here.