Yes, there is strong evidence that individual Artificial Intelligence (AI) systems will use considerably less energy per task in the coming decade than they do today.

At the moment, AI training and operation consume vast amounts of electricity, both from data centres and from cooling systems. But improvements in model design, hardware, and renewable‑energy infrastructure are expected to make future AI far more efficient — especially in countries like the UK that are already investing in energy‑optimised technology and green computing initiatives.

However, while individual AI systems will become more efficient, total energy use may still rise because demand for AI services is growing explosively — from chatbots and logistics software to medical research and driverless vehicles.

Why AI Uses So Much Energy Today

The Data Centre Problem

AI models learn from enormous datasets that require billions (and sometimes trillions) of computations.

Data centres powering these models use large numbers of graphics processing units (GPUs) and other specialised chips. These draw electricity for computation and generate heat that takes further electricity to remove.

Globally, AI computing already rivals the energy usage of entire developed nations. According to research from Massachusetts Institute of Technology (MIT), by 2026 AI could consume as much electricity as Japan or Russia.

Inefficient Model Design

Modern large language models (LLMs) — like ChatGPT or Google’s Gemini — have been designed for maximum versatility, not energy efficiency. They process far more data and parameters than necessary for most tasks. This “brute‑force” approach drives up energy use drastically.

What Will Make AI More Energy Efficient in the Future

Smaller, Specialised Models

A major trend is the move away from giant “general purpose” models towards small, task‑specific AIs.

These use fewer parameters, need less data to train, and perform single functions extremely well.

According to 2025 research from University College London (UCL), published through UNESCO, this shift could improve energy efficiency by up to 90% for many tasks such as translation, summarisation or data sorting.

In other words, instead of one big AI doing everything badly, there will be many smaller AIs doing specific things efficiently.

Quantisation and Model Compression

Techniques like quantisation and pruning shrink AI models, usually with little loss of accuracy.

Quantisation stores the numbers inside a model at lower precision (for example, 8‑bit integers instead of 32‑bit decimals), while pruning removes redundant network connections. The result is a model that is leaner and faster to run.

The University College London (UCL) study found that such optimisations cut power needs by up to 44% during inference — the stage where an AI answers queries.
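To make the two techniques concrete, here is a minimal sketch in plain Python. It is an illustration only: the weight values are made up, the function names are hypothetical, and real frameworks use more sophisticated schemes than this single shared scale.

```python
def quantise_int8(weights):
    """Map floats to integers in [-127, 127] using one shared scale factor."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    return [round(w / scale) for w in weights], scale


def dequantise(q_weights, scale):
    """Recover approximate float values from the 8-bit integers."""
    return [q * scale for q in q_weights]


def prune_by_magnitude(weights, fraction):
    """Zero out the given fraction of weights with the smallest magnitude."""
    n_prune = int(len(weights) * fraction)
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    pruned = list(weights)
    for i in order[:n_prune]:
        pruned[i] = 0.0
    return pruned


# Made-up example weights, not taken from any real model.
weights = [0.82, -0.03, 0.41, 0.007, -0.66, 0.15]

q, scale = quantise_int8(weights)
restored = dequantise(q, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))

print("8-bit values:", q)
print("largest rounding error:", max_error)
print("after pruning half:", prune_by_magnitude(weights, 0.5))
```

Each quantised weight fits in one byte instead of four, and the rounding error is never more than half the scale factor. Pruning half the weights here zeroes the three smallest ones, which a sparse storage format could then skip entirely.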

Next‑Generation Chips

AI processing hardware is evolving quickly.

Companies such as ARM UK, Graphcore (Bristol), and NVIDIA’s UK data teams are creating chips designed for neural‑network acceleration — meaning more computations per watt of power.

Graphcore says its “Bow IPU” chip delivers up to 16% better power efficiency than the company's previous generation.

AI Running on Renewable Power

The UK’s grid is rapidly decarbonising: renewables accounted for nearly 50% of electricity generation in 2024, according to National Grid ESO.

Future AI systems — particularly government and academic installations — will increasingly run on solar, wind and green‑hydrogen power.

That means even if energy use stays high, carbon impact will fall sharply.
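The reasoning is simple arithmetic: emissions are energy multiplied by the carbon intensity of the grid. The sketch below uses hypothetical figures for one facility (the 10,000 MWh and both intensity values are assumptions chosen for illustration, not measurements).

```python
def emissions_tonnes(energy_mwh, intensity_g_per_kwh):
    """CO2 in tonnes = energy (kWh) x carbon intensity (g/kWh) / 1,000,000."""
    return energy_mwh * 1000 * intensity_g_per_kwh / 1_000_000


energy_mwh = 10_000  # hypothetical annual consumption, held constant

today = emissions_tonnes(energy_mwh, 200)   # assumed current grid intensity
future = emissions_tonnes(energy_mwh, 50)   # assumed cleaner future grid
reduction = 1 - future / today

print(today, future, reduction)  # 2000.0 500.0 0.75
```

Energy use is identical in both cases, yet emissions fall by three quarters because only the grid intensity changed.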

Cooling and Infrastructure Innovation

New cooling technologies such as liquid immersion systems are reducing the power needed to keep data centres from overheating.

The UK’s Energy Systems Catapult predicts such innovations could lower cooling demands by 30–40% across national computing facilities by 2030.
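A common way to express this is power usage effectiveness (PUE): total facility energy divided by the energy used by the computing equipment itself. The sketch below uses invented figures for one site and assumes a 35% cut in cooling energy, the midpoint of the range quoted above.

```python
def pue(it_energy, cooling_energy, other_overhead):
    """Power usage effectiveness: total facility energy / IT energy."""
    return (it_energy + cooling_energy + other_overhead) / it_energy


# Hypothetical annual figures for one site, in arbitrary energy units.
it_energy, cooling, other = 100.0, 40.0, 10.0

better_cooling = cooling * (1 - 0.35)  # assumed 35% cooling saving

pue_before = pue(it_energy, cooling, other)
pue_after = pue(it_energy, better_cooling, other)

total_before = it_energy + cooling + other
total_after = it_energy + better_cooling + other
site_saving = 1 - total_after / total_before

print(round(pue_before, 2), round(pue_after, 2), round(site_saving, 3))
```

With these assumed numbers the PUE falls from 1.5 to 1.36, but the whole site uses only about 9% less energy, because the chips still draw the same power. Cooling savings help, yet they cannot replace more efficient models and hardware.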

How This Will Work in the UK

Government and Industrial Strategy

The UK is one of the first countries to formally include AI energy efficiency targets in its digital infrastructure planning.

The 2025 UK AI Energy Council, established jointly by the Department for Energy Security and Net Zero (DESNZ) and the Department for Science, Innovation and Technology (DSIT), aims to ensure all national AI data projects meet sustainability standards.

The government’s plan includes:

- Consolidating public‑sector computing into shared “green data‑centres”.

- Supporting innovation through initiatives like the UK Green Compute Mission (2026–2035).

- Partnering with firms like Equinix, Microsoft Azure UK, and Amazon Web Services London to commit to 100% renewable AI operations by 2030.

These schemes have been introduced in response to growing public concern — and to maintain the UK’s climate commitments under the Net Zero by 2050 strategy.

Advertisement

- 23.8″ FULL HD DISPLAY – 1920 x 1080 resolution in 16:9 format with 100Hz refresh rate and IPS technology for vibrant col…

- SMOOTH VISUALS – The 100Hz refresh rate reduces flicker for seamless scrolling and clear motion visuals – perfect for wo…

- TÜV RHEINLAND 3-STAR + COMFORTVIEW PLUS – Built-in ComfortView Plus reduces harmful blue light without compromising colo…

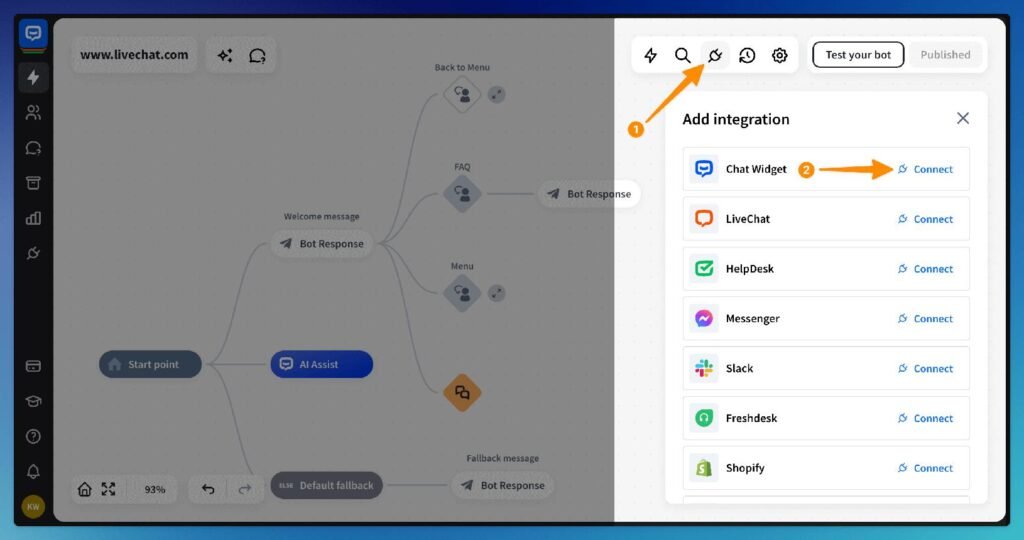

The Role of AI in Its Own Efficiency

Ironically, AI itself is being used to solve the efficiency problem.

Systems already exist that predict energy demand and autonomously adjust workloads in data centres. This is sometimes called “AI managing AI.”

Google DeepMind, which is based in London, reportedly cut the energy used for cooling Google's data centres by up to 40% by using machine learning to predict conditions and adjust cooling settings.
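The basic idea of predicting demand and shifting work accordingly can be sketched in a few lines. This is not DeepMind's method: the forecast is a deliberately naive average, the load figures are invented, and the function names are hypothetical.

```python
def forecast_by_hour(history):
    """Average each time slot across past days (a deliberately naive forecast)."""
    slots = len(history[0])
    return [sum(day[s] for day in history) / len(history) for s in range(slots)]


def pick_quiet_slots(forecast, n_slots):
    """Return the n_slots slots with the lowest forecast load, in clock order."""
    ranked = sorted(range(len(forecast)), key=lambda s: forecast[s])
    return sorted(ranked[:n_slots])


# Invented load readings for six time slots on two previous days.
history = [
    [70, 55, 40, 45, 80, 95],
    [74, 53, 42, 47, 84, 99],
]

forecast = forecast_by_hour(history)
slots = pick_quiet_slots(forecast, 2)

print(forecast)  # [72.0, 54.0, 41.0, 46.0, 82.0, 97.0]
print(slots)     # [2, 3]
```

A scheduler built this way would run deferrable jobs, such as model retraining, in slots 2 and 3, when the facility is forecast to be least busy. Production systems replace the average with a learned model and also control cooling directly.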

How Much Energy Could Be Saved in the UK

Forecasted Improvements

Based on current research:

- Quantisation and compression → up to 40–45% less energy per model run.

- Smaller, specialised AI systems → up to 90% reduction for repetitive industrial tasks.

- Better cooling + hardware upgrades → around 30% less infrastructure energy demand.

- Renewable grid integration → roughly 70–80% lower emissions (though no drop in total energy use).

Put together, if the UK fully adopts these measures by 2035, analysts at the University of Oxford’s Energy & AI Initiative estimate national savings of 3.5–4 terawatt‑hours (TWh) per year, enough to power about 1.1 million UK homes.

That’s not trivial: the same report suggests this could offset the energy load of running the UK’s entire future public‑sector AI ecosystem.
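As a quick sanity check on the homes comparison, the sketch below works out the household consumption that the quoted figures imply. Only the 3.5–4 TWh and 1.1 million homes come from the text; the function name is hypothetical.

```python
KWH_PER_TWH = 1_000_000_000  # 1 TWh = one billion kWh


def implied_kwh_per_home(savings_twh, homes):
    """Annual electricity per home implied by a saving spread over `homes`."""
    return savings_twh * KWH_PER_TWH / homes


low = implied_kwh_per_home(3.5, 1_100_000)
high = implied_kwh_per_home(4.0, 1_100_000)

print(round(low), round(high))  # 3182 3636
```

The estimate therefore assumes each home uses roughly 3,200 to 3,600 kWh of electricity a year.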

A Real‑World and Cynical View

Efficiency Gains, But Demand Explosion

Even as AI gets greener, its use is expanding everywhere — from hospitals to education, transport, and media.

The UK AI Energy Council’s 2025 briefing concedes that while per‑unit energy use may drop, total AI‑related electricity demand could triple by 2035 if the number of models and services keeps rising.

In short, every efficiency gain risks being swallowed by consumption growth. As one analyst put it:

“AI isn’t saving energy — it’s spending it more cleverly.”
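The arithmetic behind this rebound effect is short: total energy is the energy per task multiplied by the number of tasks. The sketch below assumes a 45% per‑task saving (the upper figure quoted for quantisation) and a purely hypothetical fivefold rise in demand.

```python
def total_energy_change(efficiency_saving, demand_growth):
    """Factor by which total energy changes: (1 - saving) x demand growth."""
    return (1 - efficiency_saving) * demand_growth


def break_even_growth(efficiency_saving):
    """Demand growth at which the efficiency saving is exactly cancelled out."""
    return 1 / (1 - efficiency_saving)


saving = 0.45        # per-task saving, from the quantisation figure
demand_growth = 5    # hypothetical: five times as many tasks

print(round(total_energy_change(saving, demand_growth), 2))  # 2.75
print(round(break_even_growth(saving), 2))                   # 1.82
```

Under these assumptions total energy use still rises to 2.75 times today's level, and demand only needs to grow by about 82% to wipe out the saving altogether.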

The Environmental Tipping Point

If unchecked, the UK could end up shifting from carbon waste to “digital waste” — huge data churn for marginal real‑world benefit.

Regulators are therefore considering AI energy tax credits and mandatory efficiency scorecards for both public and private models, forcing accountability into the system.

The moment efficiency stops being a selling point and becomes a legal requirement will determine whether AI truly becomes sustainable or just “less bad.”

References (UK and International Sources)

- ucl.ac.uk

- UK AI Energy Council – Powering the Future of Artificial Intelligence, 2025

- National Grid ESO – Electricity Generation Mix 2024

- ox.ac.uk

- es.catapult.org.uk

- deepmind.google

Summary

| Efficiency Measure | Expected Energy Saving | UK Real‑World Impact by 2035 |
|---|---|---|
| Model compression & quantisation | 40–45% reduction | Lower costs for major AI providers |
| Smaller task‑specific models | 70–90% savings for limited tasks | Efficient public‑service automation |
| Hardware advances (GPUs/IPUs) | 15–20% efficiency gain | Stronger UK chip sector (ARM, Graphcore) |
| Cooling & infrastructure | 30–40% reduction in data centre cooling demand | 1–2 TWh national saving |
| Renewable power mix | 70–80% fewer emissions | Greener national grid usage overall |

Final Thoughts

AI in Britain is gradually becoming cleaner, leaner and more responsible.

Through smarter algorithms, efficient chips and renewable power, the UK could save several terawatt‑hours of electricity every year by the early 2030s.

But the real challenge isn’t optimisation — it’s restraint.

If AI continues to expand across every industry and household, the savings from efficiency may simply balance, not reduce, the total energy footprint.

The technology will become less wasteful, but only society can decide whether to make it less excessive.
