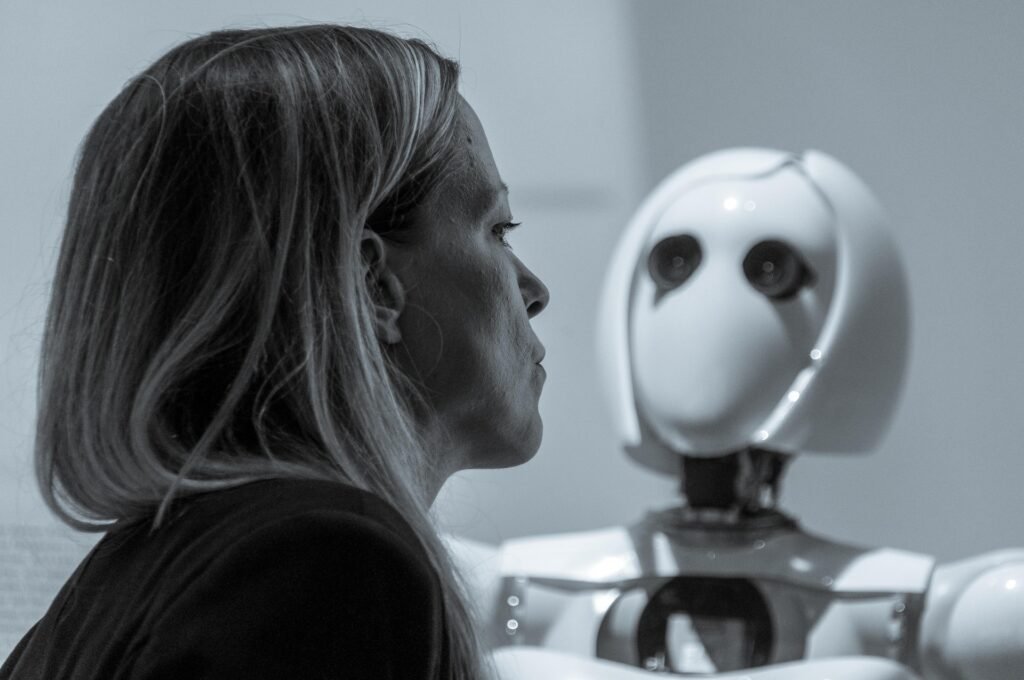

Artificial intelligence now drives vast portions of global financial trading — from portfolio optimisation to algorithmic trading and credit risk analysis. Yet, according to the UK’s National Cyber Security Centre (NCSC), the same AI revolution that allows for smarter, faster markets could also make them frighteningly fragile. One of the most pressing emerging threats is “data poisoning” – the deliberate insertion of false or corrupt data into the systems that AI depends upon.

Such manipulation could lead to catastrophic misjudgements by trading algorithms, resulting in billions being wiped off the financial markets within minutes. But how likely is such an event, and how could the markets recover if it happened?

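Below, we outline three potential scenarios (best case, worst case, and most likely), drawing on expert analysis from cybersecurity and financial risk specialists. The probabilities attached to the three scenarios sum to roughly 100%, so they are best read as relative likelihoods should a poisoning attempt occur, not as the chance of an attack happening at all.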

Understanding the Threat: The Mechanics of Data Poisoning

Data poisoning involves corrupting the datasets AI models rely on to make decisions. In financial contexts, this could mean injecting falsified information into public data feeds — such as central bank indicators, company filings, commodities prices, or online news sources that sentiment-analysis AIs scan in real time.

Dr Ian Levy, Technical Director at the NCSC, warns:

“AI systems are only as trustworthy as the data they consume. If that data is manipulated — even subtly — it can cascade through automated decision-making systems at astonishing speed.”

Financial AI systems operate across thousands of automated portfolios, making markets hyper-reactive. As noted in a 2023 paper by the Bank of England’s Financial Policy Committee, “algorithmic trading can amplify systemic shocks when trading strategies react simultaneously to distorted signals.”

The Probability and Timing of an AI-Driven Market Disruption

While the NCSC has not published specific probability figures, cybersecurity analysts have offered reasoned estimates:

| Estimate | Probability of significant market impact from data poisoning in the next five years | Estimated recovery time |
|---|---|---|
| Low (with robust data validation) | 5–7% | 24–48 hours |
| Moderate (if data verification remains inconsistent) | 15–20% | 2–7 days |
| High-risk (if misinformation spreads before detection) | 30%+ | 2–4 weeks |

According to Professor Sadie Creese, cybersecurity expert at the University of Oxford, “A single manipulated data stream could be amplified across global AI systems before analysts even realise something is wrong. The synchronicity of errors in automated trading is what makes this more dangerous than traditional cyberattacks.”

Scenario 1: Best-Case — The Early Catch

Overview

In the best possible outcome, robust data validation and anomaly detection catch the poisoning attempt before widespread market disruption occurs.

Probability: 30–40%

Recovery Time: Less than 24 hours

Key Factors

- High data integrity standards: Financial institutions maintain diverse data sources and apply cross-verification processes.

- Proactive regulators: The Financial Conduct Authority (FCA) and the Bank of England’s Cyber Stress Testing Programme rapidly isolate affected systems.

- AI transparency tools: Algorithms are monitored through “explainable AI” frameworks, allowing quick identification of anomalies.

- Rapid human intervention: Risk managers step in to halt affected algorithms before cascading effects occur.
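
The anomaly detection mentioned above can take many forms. As a minimal sketch, assuming a single numeric feed and an illustrative threshold of 3.5, the following flags readings that sit far from the recent median using a robust z-score based on the median absolute deviation (MAD). The function names and sample values are hypothetical and not taken from any institution's systems.

```python
from statistics import median


def robust_z_scores(values):
    """Return a MAD-based z-score for each value.

    The 0.6745 factor rescales the MAD so that scores are comparable
    to ordinary z-scores when the data are roughly normal.
    """
    med = median(values)
    mad = median(abs(v - med) for v in values)
    if mad == 0:
        # No spread at all: this simple sketch cannot score deviations.
        return [0.0 for _ in values]
    return [0.6745 * (v - med) / mad for v in values]


def flag_anomalies(values, threshold=3.5):
    """Return the indices whose robust z-score magnitude exceeds the threshold."""
    return [i for i, z in enumerate(robust_z_scores(values)) if abs(z) > threshold]


# Hypothetical recent readings from one feed; the sixth value is out of line.
readings = [101.2, 100.9, 101.4, 101.1, 100.8, 87.3, 101.0]
suspect = flag_anomalies(readings)
print(suspect)
```

A median-based score is used here because a poisoned value would otherwise distort the mean and standard deviation it is being judged against. A subtle, slow-moving manipulation would still evade a check this simple.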

Outcome

Market disruption remains limited to a few sectors, possibly causing a short-lived volatility spike similar to the 2010 “Flash Crash”, in which prices largely recovered within about half an hour. Confidence dips temporarily but rebounds quickly. Cybersecurity analysts trace the root cause and apply fixes, and regulators issue guidance within a day.

Preparation

Markets should:

- Maintain redundant data feeds verified by independent third parties.

- Conduct continuous AI model audits to detect unusual behaviour.

- Share real-time threat intelligence between institutions using the Financial Sector Cyber Collaboration Centre (FSCCC).
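
To illustrate the first point, redundant feeds are only useful if they are compared with one another. The sketch below assumes three independent feeds quoting the same instrument and a hypothetical 1% tolerance. It takes the median as the consensus value and reports any feed that strays too far from it. The feed names and prices are invented for illustration.

```python
from statistics import median


def cross_check(quotes, tolerance=0.01):
    """Compare each feed's quote with the median of all feeds.

    Returns the consensus value and a sorted list of feed names whose
    quote differs from the consensus by more than `tolerance` (relative).
    """
    consensus = median(quotes.values())
    outliers = sorted(
        name
        for name, value in quotes.items()
        if abs(value - consensus) > tolerance * abs(consensus)
    )
    return consensus, outliers


# Hypothetical quotes for one instrument from three independent providers.
quotes = {"feed_a": 82.41, "feed_b": 82.39, "feed_c": 79.10}
consensus, outliers = cross_check(quotes)
print(consensus, outliers)
```

The approach only works while a majority of feeds remain honest. If several providers draw on the same upstream source, a single poisoned input can move the median itself, which is the shared-dependency risk the worst-case scenario describes.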

As Ciaran Martin, founding CEO of the NCSC, has previously stated:

“It’s not a question of building impregnable systems; it’s about being able to respond at machine speed when vulnerabilities are exploited.”

Scenario 2: Worst-Case — The Algorithmic Contagion

Overview

A coordinated data poisoning attack introduces subtle but plausible false financial indicators — perhaps related to inflation rates, oil inventories, or GDP forecasts — into widely used public data feeds. AI systems across the City of London and global markets react simultaneously, triggering algorithmic trades based on flawed data.

Probability: 10–15%

Recovery Time: Two to four weeks

Key Factors

- Widespread use of identical data sources: Many firms unknowingly rely on similar open datasets or APIs.

- Delayed detection: The false data appears legitimate, and humans notice only after massive trade volume discrepancies occur.

- Cascade reaction: Automated sell orders trigger further drops, causing flash crashes across sectors from commodities to currency markets.

- Media amplification: AI-generated “news” based on the same poisoned data triggers public panic.

Outcome

Within minutes, stock indices plummet by 10–15%. Global coordination between exchanges and regulators temporarily halts trading, reminiscent of the circuit breakers employed in the 2020 COVID-19 market crash.

Billions are wiped off portfolios. Confidence in AI-driven finance takes a major hit, leading to a temporary retreat to human-managed funds. Market recovery begins after central banks intervene and forensic analysts isolate the corrupt data sources.

Preparation

Markets must:

- Develop forensic data-lineage tools that trace data origins in real time.

- Implement mandatory AI risk disclosure regulations, as recommended by the Bank for International Settlements (2023).

- Stress-test algorithmic systems under “data integrity compromise” scenarios.

Dr Eleanor Fitt, senior cybersecurity researcher at the London School of Economics, notes:

“Our financial APIs are interconnected nervous systems. If you corrupt the sensory input, the body goes into spasm — and every algorithm, acting rationally on false premises, magnifies irrational outcomes.”

Scenario 3: Most Likely Case — Controlled Chaos

Overview

A partial poisoning of datasets causes selective errors across financial AI systems, resulting in volatility but not systemic failure. Markets fall moderately before regulators identify the compromised data.

Probability: 50–55%

Recovery Time: 3–5 days

Key Factors

- Fragmented exposure: Only certain trading algorithms or sectors depend on the poisoned datasets.

- Human oversight mitigates spread: Analysts and compliance officers intervene quickly once inconsistencies are detected.

- Automated suspensions: Circuit breakers prevent panic selling from escalating.

- Global cooperation: The NCSC, Bank of England, and Financial Stability Board coordinate an early investigation.
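
The automated suspensions listed above follow a simple rule: stop trading once prices fall too far from a reference level. The sketch below assumes a single instrument and an illustrative 7% threshold. Real exchange rules are tiered and time-limited, so the class and its parameters are hypothetical simplifications.

```python
class CircuitBreaker:
    """Latches into a halted state once the drop from a reference price
    reaches `max_drop` (expressed as a fraction, e.g. 0.07 for 7%)."""

    def __init__(self, reference_price, max_drop=0.07):
        self.reference_price = reference_price
        self.max_drop = max_drop
        self.halted = False

    def on_price(self, price):
        """Process a price update; return True if trading may continue."""
        drop = (self.reference_price - price) / self.reference_price
        if drop >= self.max_drop:
            self.halted = True
        # Once halted, the breaker stays halted until reset by a human.
        return not self.halted


breaker = CircuitBreaker(reference_price=100.0, max_drop=0.07)
results = [breaker.on_price(p) for p in (99.0, 96.5, 92.0, 98.0)]
print(results)
```

The breaker deliberately stays halted even when the price bounces back to 98.0. Resuming is left to a human decision, in line with the role the scenario gives to analysts and compliance officers.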

Outcome

Markets experience a short, sharp correction, perhaps a 3–5% drop across major indices, followed by a slow return to stability within a week. Investor confidence dips but does not collapse. In the long term, the event triggers regulatory reforms akin to those that followed the 2010 Flash Crash and the 2017 WannaCry attack on the NHS.

Preparation

Financial institutions need to:

- Embed AI ethics and resilience frameworks like those promoted by the Alan Turing Institute.

- Adopt multi-source data integration models that can flag inconsistencies across feeds.

- Continue developing AI observability tools, which explain model decisions and flag abrupt shifts in behaviour.

- Require third-party cyber risk insurance that covers AI data corruption incidents.

According to Dame Wendy Hall, one of the architects of the UK’s AI strategy:

“The challenge isn’t that AI makes mistakes. It’s that AI systems make the same mistake simultaneously, globally, and faster than we can correct it.”

Market Preparation: Building Resilience Before the Crisis

Five Key Defensive Measures

- Data Provenance Verification: Implement cryptographic verification for financial data sources using distributed ledger technology to ensure authenticity.

- AI Model Forensics: Develop tools capable of performing post-event analysis of AI decision paths, allowing regulators to trace the cause of automated trades.

- Regulatory Coordination: Strengthen partnerships between the Bank of England, FCA, and NCSC for cross-sector incident response drills.

- Operational Redundancy: Maintain isolated “dark sites” or backup trading infrastructure disconnected from real-time data feeds for emergency fallback.

- Public Communication Strategy: Prepare rapid-response communication frameworks to manage investor panic and misinformation during cyber incidents.
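
The data provenance measure rests on making tampering detectable. The sketch below is not a distributed ledger. It shows only the hash-chaining idea that ledgers build on, where each record stores the SHA-256 hash of the one before it, so altering any earlier record breaks verification. The record fields and indicator names are hypothetical.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first record


def record_hash(payload, prev_hash):
    """Hash a payload together with the previous record's hash."""
    body = json.dumps({"payload": payload, "prev": prev_hash}, sort_keys=True)
    return hashlib.sha256(body.encode("utf-8")).hexdigest()


def append(chain, payload):
    """Append a payload to the chain, linking it to the last record."""
    prev_hash = chain[-1]["hash"] if chain else GENESIS
    chain.append(
        {"payload": payload, "prev": prev_hash, "hash": record_hash(payload, prev_hash)}
    )


def verify(chain):
    """Return True only if every link and every stored hash is intact."""
    prev_hash = GENESIS
    for entry in chain:
        if entry["prev"] != prev_hash:
            return False
        if entry["hash"] != record_hash(entry["payload"], entry["prev"]):
            return False
        prev_hash = entry["hash"]
    return True


chain = []
append(chain, {"indicator": "cpi_yoy", "value": 3.2})
append(chain, {"indicator": "oil_inventory", "value": 421.5})
ok_before = verify(chain)

chain[0]["payload"]["value"] = 4.9  # simulate a poisoned historical value
ok_after = verify(chain)
print(ok_before, ok_after)
```

A chain held by a single party can still be rewritten from end to end by an attacker with full access. This is why the measure above pairs hashing with distribution across independent parties, and why digital signatures from the data publisher would normally be added as well.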

Summary Table: Comparing the Scenarios

| Scenario | Probability | Market Impact | Economic Loss Estimate | Recovery Time | Key Factors |
|---|---|---|---|---|---|
| Best Case: Early Catch | 30–40% | Minor disruption | < £1 billion | < 24 hours | Active monitoring and human oversight |
| Most Likely: Controlled Chaos | 50–55% | Temporary volatility | £5–15 billion | 3–5 days | Regulatory coordination and quick isolation |
| Worst Case: Algorithmic Contagion | 10–15% | Systemic collapse | £80–100 billion | 2–4 weeks | Global data synchronisation and weak controls |
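
As a rough worked example, the figures in the table can be combined into a probability-weighted loss. The sketch below rests on several assumptions that are not in the source: it takes the midpoint of each range, treats "< £1 billion" as a range of £0–1 billion, and reads the probabilities as conditional on a poisoning attempt taking place.

```python
# Ranges copied from the summary table; losses are in billions of pounds.
scenarios = {
    "best": {"prob": (0.30, 0.40), "loss_bn": (0.0, 1.0)},
    "most_likely": {"prob": (0.50, 0.55), "loss_bn": (5.0, 15.0)},
    "worst": {"prob": (0.10, 0.15), "loss_bn": (80.0, 100.0)},
}


def midpoint(bounds):
    """Midpoint of a (low, high) range."""
    low, high = bounds
    return (low + high) / 2


# Normalise so the midpoint probabilities form a proper distribution.
total_prob = sum(midpoint(s["prob"]) for s in scenarios.values())

expected_loss_bn = sum(
    midpoint(s["prob"]) / total_prob * midpoint(s["loss_bn"])
    for s in scenarios.values()
)
print(round(expected_loss_bn, 3))
```

Under these assumptions the midpoint probabilities sum to 1 and the weighted loss comes to about £16.7 billion per incident. Roughly two-thirds of that figure comes from the worst-case scenario, despite it being the least likely of the three.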

Conclusion: A Predictable Shock Waiting to Happen

The probability of a catastrophic, AI-driven financial meltdown from data poisoning remains relatively low — but the consequences are so severe that even a single occurrence could reshape global finance. The NCSC’s warnings underline that in modern markets, data integrity equals financial stability.

While the technology to prevent such events already exists, the real test lies in implementation and vigilance. AI may be fast, but as history shows, it’s human complacency — not malicious brilliance — that usually causes the biggest crashes.

In the words of Andrew Bailey, Governor of the Bank of England:

“Financial systems don’t collapse because of what we don’t know. They collapse because of what we assume is working fine until it isn’t.”

The smart money, then, is on vigilance — not just intelligence.